
You may have encountered a situation where your client reported high latency while your Cloud Spanner metrics didn't show the corresponding latency. This article describes a case study of increased client-side latency caused by session pool exhaustion, and how you can diagnose the situation by using OpenCensus features and the Cloud Spanner client library for Go.

Case study

Here is the overview of the experiment.

- Go client library version: 1.33.0
- SessionPoolConfig:

By using the session pool, multiple read/write agents perform transactions in parallel from a GCE VM in us-west2 to a Cloud Spanner DB in us-central1. Generally, you're recommended to keep your clients close to your Cloud Spanner DB for better performance. In this case study, a distant client machine is deliberately used so that the network latency is more distinguishable and sessions are used for a fair amount of time without overloading the client and servers.

As preparation for the experiment, this sample leaderboard schema was used. As opposed to using a random PlayerId between 1000000000 and 9000000000 as in the sample code, sequential ids from 1 to 1000 were used as PlayerId in this experiment. When an agent ran a transaction, a random id between 1 and 1000 was chosen.

Read agent:

- (1) Randomly read a single row, do an iteration, and end the read-only transaction.

  "SELECT * FROM Players p JOIN Scores s ON p.PlayerId = s.PlayerId WHERE p.PlayerId=@Id ORDER BY s.Score DESC LIMIT 10"

- (2) Sleep 100 ms.

Write agent:

- (1) Insert a row and end the read-write transaction.

  "INSERT INTO Scores(PlayerId, Score, Timestamp) VALUES (@playerID, @score, @timestamp)"

- (2) Sleep 100 ms.

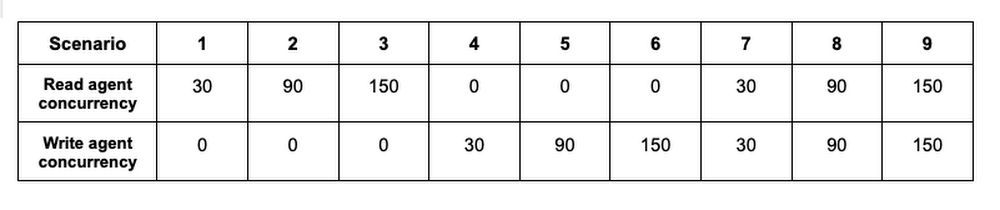

Each scenario had a different concurrency, where each agent continued to repeat (1) ~ (2) for 10 minutes. There was a 15 minute interval between scenarios 3 and 4, and between scenarios 6 and 7, in which you can see the session pool shrink behavior.
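The agent loop can be sketched roughly as follows. This is only an illustration of the "run a transaction, then sleep" pattern: `runAgents` and `fakeTxn` are hypothetical names that don't appear in the experiment's code, and a short sleep stands in for the real Cloud Spanner transaction.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
	"time"
)

// runAgents starts the given number of agents. Each agent repeats
// (1) run one transaction via txn and (2) sleep for pauseMs milliseconds,
// until totalMs milliseconds have elapsed. It returns the number of
// transactions completed across all agents.
func runAgents(agents, totalMs, pauseMs int, txn func(agent int)) int64 {
	var completed int64
	var wg sync.WaitGroup
	deadline := time.Now().Add(time.Duration(totalMs) * time.Millisecond)
	for i := 0; i < agents; i++ {
		wg.Add(1)
		go func(agent int) {
			defer wg.Done()
			for time.Now().Before(deadline) {
				txn(agent) // step (1): one read or write transaction
				atomic.AddInt64(&completed, 1)
				time.Sleep(time.Duration(pauseMs) * time.Millisecond) // step (2)
			}
		}(i)
	}
	wg.Wait()
	return completed
}

func main() {
	// Assumption: a 5 ms sleep stands in for a Cloud Spanner transaction.
	fakeTxn := func(agent int) { time.Sleep(5 * time.Millisecond) }
	total := runAgents(4, 200, 20, fakeTxn)
	fmt.Printf("4 agents completed %d transactions\n", total)
}
```

In the experiment itself, the pause was 100 ms and each scenario ran for 10 minutes. Only the number of agents changed between scenarios.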

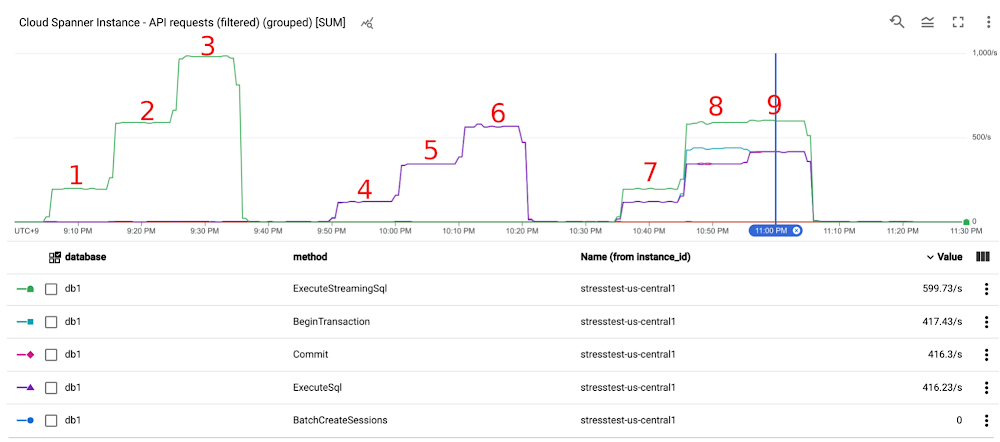

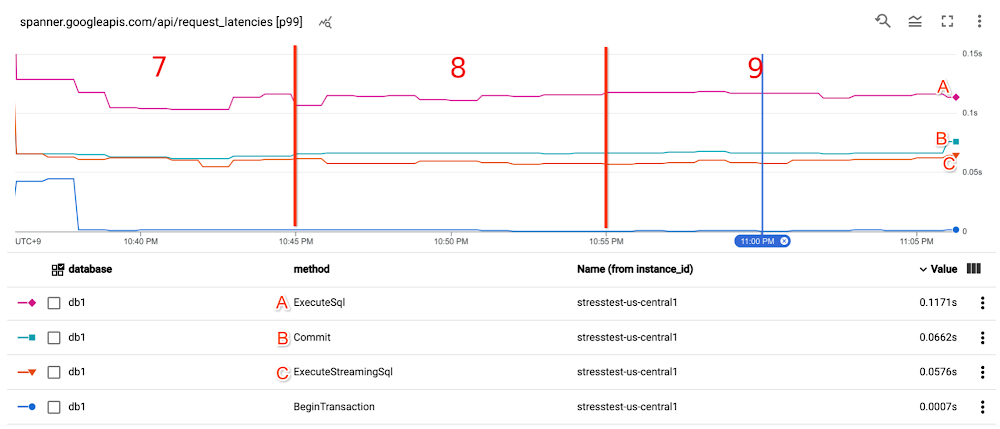

The following graph shows the throughput (requests per second). Until Scenario 8, the throughput increased proportionally. However, in Scenario 9, the throughput didn't increase as expected, and the server-side metrics don't indicate that the bottleneck is on the server side.

Cloud Spanner API request latency (spanner.googleapis.com/api/request_latencies) was stable for ExecuteSql requests and Commit requests throughout the experiment, mostly less than 0.07s at p99.

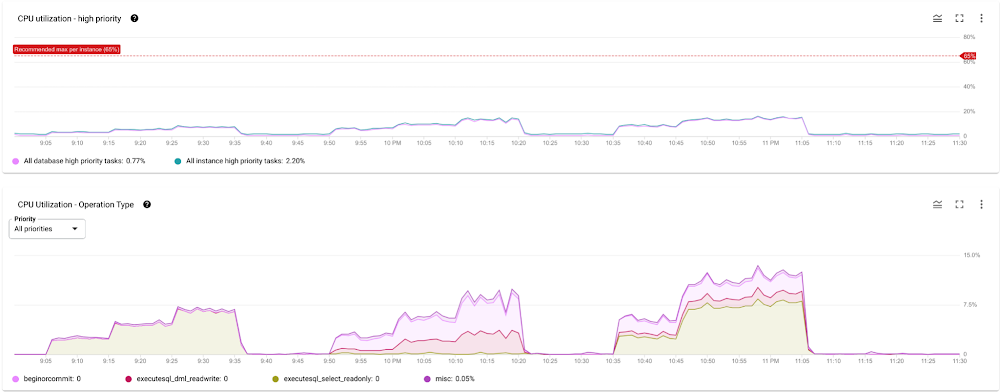

Instance CPU utilization was low and under the recommended rate (high priority < 65%) all the time.

How to enable OpenCensus metrics and traces

Before looking into actual OpenCensus examples and other metrics, let's see how you can use OpenCensus with the Cloud Spanner library in Go.

As a simple approach, you can do it this way.

```go
package main

import (
	"context"
	"fmt"
	"log"
	"time"

	"cloud.google.com/go/spanner"
	"contrib.go.opencensus.io/exporter/stackdriver"
	"go.opencensus.io/stats"
	"go.opencensus.io/stats/view"
	"go.opencensus.io/tag"
	"go.opencensus.io/trace"
)

func main() {
	var (
		projectID  = "YOUR_PROJECT"
		instanceID = "YOUR_INSTANCE"
		databaseID = "YOUR_DB"
	)

	if err := spanner.EnableGfeLatencyView(); err != nil {
		log.Fatalf("Failed to enable Spanner GFE latency view: %v", err)
	}
	// You may need to use the session pool for more than a minute so that the health checker reports some stats.
	if err := spanner.EnableStatViews(); err != nil {
		log.Fatalf("Failed to enable Spanner stat views: %v", err)
	}

	exporter, err := stackdriver.NewExporter(
		stackdriver.Options{
			ProjectID:    projectID,
			MetricPrefix: "spanner-observability-demo",
		})
	if err != nil {
		log.Fatal(err)
	}
	defer exporter.Flush()

	view.RegisterExporter(exporter)
	trace.RegisterExporter(exporter)
	trace.ApplyConfig(trace.Config{DefaultSampler: trace.AlwaysSample()}) // Or adjust the sampling rate, e.g. trace.ProbabilitySampler(0.05)

	if err := exporter.StartMetricsExporter(); err != nil {
		log.Fatal(err)
	}
	defer exporter.StopMetricsExporter()

	// Add the 2nd section here.
}
```

- spanner.EnableGfeLatencyView(): This enables the GFELatency metric, which is described in Use GFE Server-Timing Header in Cloud Spanner Debugging.
- spanner.EnableStatViews(): This enables all session pool metrics, which are described in Troubleshooting application performance on Cloud Spanner with OpenCensus.

Additionally, you may want to register and capture your own latency metrics. In the code below, that's "my_custom_spanner_api_call_applicaiton_latency", and the captured latency is the time difference between the start and the end of a transaction. In other words, this is the end-to-end Cloud Spanner API call latency from a client application's perspective. At the bottom of the main function above, you can add the following code.

```go
	// Add the 2nd section here.
	var (
		spanApiCallLatencyMs = stats.Int64("my_custom_spanner_api_call_latency_ms", "Spanner API call latency", stats.UnitMilliseconds)
		tagKeyDatabase       = tag.MustNewKey("database")
		tagKeyInstance       = tag.MustNewKey("instance_id")
		tagKeyOp             = tag.MustNewKey("op")
	)
	v := &view.View{
		Name:        "my_custom_spanner_api_call_applicaiton_latency",
		Measure:     spanApiCallLatencyMs,
		Description: "The distribution of the latencies captured in the app code",
		Aggregation: view.Distribution(0.0, 0.01, 0.05, 0.1, 0.3, 0.6, 0.8, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 8.0, 10.0, 13.0,
			16.0, 20.0, 25.0, 30.0, 40.0, 50.0, 65.0, 80.0, 100.0, 130.0, 160.0, 200.0, 250.0, 300.0, 400.0, 500.0, 650.0, 800.0,
			1000.0, 2000.0, 5000.0, 10000.0, 20000.0, 50000.0, 100000.0),
		TagKeys: []tag.Key{tagKeyDatabase, tagKeyInstance, tagKeyOp},
	}
	if err := view.Register(v); err != nil {
		log.Fatal(err)
	}
	// Add the 3rd section here.
```

After that, you can measure the latency like this.

```go
	// Add the 3rd section here.
	db := fmt.Sprintf("projects/%s/instances/%s/databases/%s", projectID, instanceID, databaseID)
	ctx := context.Background()
	// The ClientConfig contents were lost from this copy; set SessionPoolConfig here as needed.
	client, err := spanner.NewClientWithConfig(ctx, db, spanner.ClientConfig{})
	if err != nil {
		log.Fatal(err)
	}
	defer client.Close()

	// Measure a read-only transaction from the application's point of view.
	now := time.Now()
	stmt := spanner.NewStatement("SELECT * FROM Players p JOIN Scores s ON p.PlayerId = s.PlayerId WHERE p.PlayerId=@Id ORDER BY s.Score DESC LIMIT 10")
	stmt.Params["Id"] = 1
	iter := client.Single().Query(ctx, stmt)
	if err = iter.Do(func(r *spanner.Row) error {
		return nil // Iterate over the rows without using them.
	}); err != nil {
		log.Fatal(err)
	}
	latencyMs := time.Since(now).Milliseconds()
	occtx, err := tag.New(ctx, tag.Insert(tagKeyDatabase, databaseID), tag.Insert(tagKeyInstance, instanceID), tag.Insert(tagKeyOp, "read"))
	if err != nil {
		log.Fatal(err)
	}
	stats.Record(occtx, spanApiCallLatencyMs.M(latencyMs))

	// Measure a read-write transaction in the same way.
	now = time.Now()
	_, err = client.ReadWriteTransaction(ctx, func(ctx context.Context, txn *spanner.ReadWriteTransaction) error {
		// The statement body was lost from this copy; the values below are placeholders.
		stmt := spanner.Statement{
			SQL:    "INSERT INTO Scores(PlayerId, Score, Timestamp) VALUES (@playerID, @score, @timestamp)",
			Params: map[string]interface{}{"playerID": 1, "score": 1000, "timestamp": time.Now()},
		}
		_, err := txn.Update(ctx, stmt)
		if err != nil {
			return err
		}
		return nil
	})
	if err != nil {
		log.Fatal(err)
	}
	latencyMs = time.Since(now).Milliseconds()
	occtx, err = tag.New(ctx, tag.Insert(tagKeyDatabase, databaseID), tag.Insert(tagKeyInstance, instanceID), tag.Insert(tagKeyOp, "write"))
	if err != nil {
		log.Fatal(err)
	}
	stats.Record(occtx, spanApiCallLatencyMs.M(latencyMs))
	time.Sleep(time.Second * 10) // Wait a while so that stats are sent. This isn't needed if your program lasts longer.
```
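If you measure more than a couple of calls, the timing logic may be easier to keep consistent in a small helper. The following is a minimal sketch using only the standard library. `measureMs` and `sleepOp` are hypothetical names, and a sleep stands in for the Cloud Spanner call. In a real program you would pass the returned value to stats.Record as in the code above.

```go
package main

import (
	"errors"
	"fmt"
	"time"
)

// measureMs runs op once and returns the elapsed wall-clock time in
// milliseconds together with op's error. The latency is returned even when
// op fails, so slow failures (for example, timeouts) can be recorded too.
func measureMs(op func() error) (int64, error) {
	start := time.Now()
	err := op()
	return time.Since(start).Milliseconds(), err
}

// sleepOp is a stand-in for a Cloud Spanner call (an assumption for this
// sketch): it sleeps for ms milliseconds and then returns err.
func sleepOp(ms int, err error) func() error {
	return func() error {
		time.Sleep(time.Duration(ms) * time.Millisecond)
		return err
	}
}

func main() {
	ms, err := measureMs(sleepOp(20, nil))
	fmt.Printf("read: %d ms, err=%v\n", ms, err)

	ms, err = measureMs(sleepOp(20, errors.New("aborted")))
	fmt.Printf("write: %d ms, err=%v\n", ms, err)
}
```

Because the timer wraps the whole call, the measured value includes everything the client library does inside it, such as waiting for a session and retrying.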

Observations from the case study

Let's check the collected stats from the server side to the client. In the diagram below, which is from the Cloud Spanner end-to-end latency guide, let's check the results in the order 4 -> 3 -> 2 -> 1.

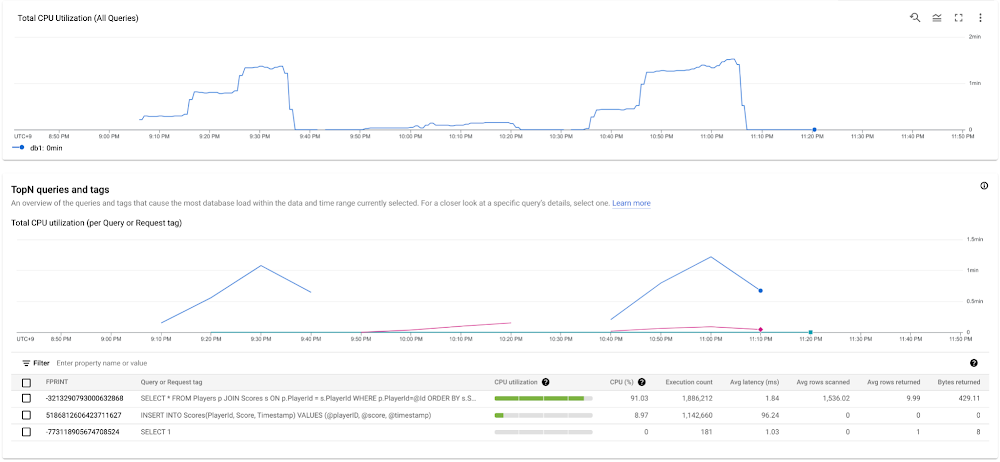

Query latency

One of the convenient approaches to visualize query latency is to use Query insights. You can get an overview of the queries and tags that cause the most database load within the selected time range. In this scenario, Avg latency (ms) for the read requests was 1.84. Note that the "SELECT 1" queries are issued from the session pool library to keep the sessions alive.

For more details, you can use Query statistics. Unfortunately, mutation data is not available in these tools, but if you're concerned about lock conflicts with your mutations, you can check Lock statistics.

Cloud Spanner API request latency

This latency metric has a big gap compared to the query latency in Query insights above. Note that an INSERT query involves an ExecuteSql call and a Commit call, as you can see in a trace later. Also note that ExecuteSql has some noise because of session management requests. There are two main reasons for the latency gap between query latency and Cloud Spanner API request latency. Firstly, Query insights shows average latency, whereas the stats below are at the 99th percentile. Secondly, the Cloud Spanner API Front End was far from the Cloud Spanner Database. The network latency between us-west2 and us-central1 is the major latency factor.

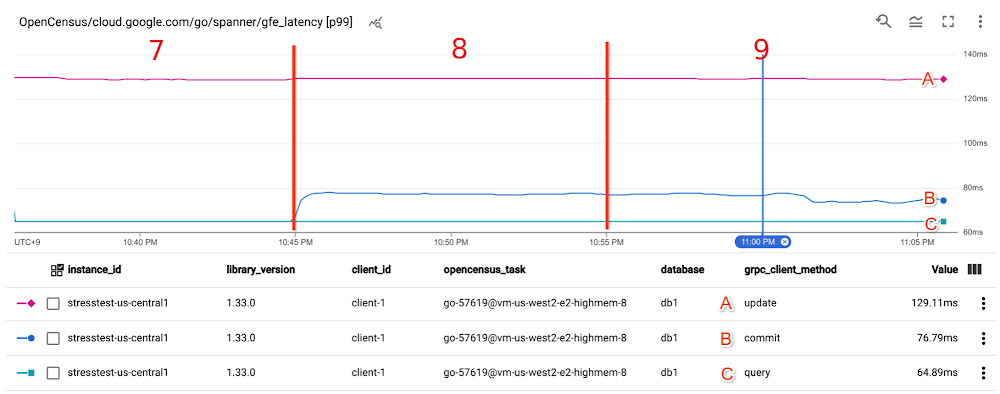
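To see why an average and a 99th percentile of the same requests can differ so much, here is a small sketch with made-up numbers. The sample values are hypothetical and are not taken from the experiment. `percentile` uses the simple nearest-rank method, which is not necessarily how Cloud Monitoring aggregates its distributions.

```go
package main

import (
	"fmt"
	"math"
	"sort"
)

// mean returns the arithmetic mean of xs, or 0 for an empty slice.
func mean(xs []float64) float64 {
	if len(xs) == 0 {
		return 0
	}
	sum := 0.0
	for _, x := range xs {
		sum += x
	}
	return sum / float64(len(xs))
}

// percentile returns the p-th percentile (0 < p <= 100) of xs using the
// nearest-rank method. It does not modify xs.
func percentile(xs []float64, p float64) float64 {
	if len(xs) == 0 {
		return 0
	}
	sorted := append([]float64(nil), xs...)
	sort.Float64s(sorted)
	rank := int(math.Ceil(p * float64(len(sorted)) / 100))
	if rank < 1 {
		rank = 1
	}
	if rank > len(sorted) {
		rank = len(sorted)
	}
	return sorted[rank-1]
}

func main() {
	// Hypothetical latencies in ms: 98 fast requests and 2 slow ones.
	samples := make([]float64, 0, 100)
	for i := 0; i < 98; i++ {
		samples = append(samples, 2)
	}
	samples = append(samples, 60, 70)

	fmt.Printf("mean=%.2f ms p50=%.0f ms p99=%.0f ms\n",
		mean(samples), percentile(samples, 50), percentile(samples, 99))
}
```

With these numbers the mean stays close to the fast requests while the 99th percentile lands on one of the slow ones, so comparing an average from one tool with a p99 from another overstates the gap.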

Google Front End latency

The GFE latency metric (OpenCensus/cloud.google.com/go/spanner/gfe_latency) basically shows the same results as the Cloud Spanner API request latency.

By comparing Cloud Spanner API request latency and Google Front End latency, you can conclude that there wasn't any major latency gap between Google Front End and Cloud Spanner API Front End throughout the experiment.

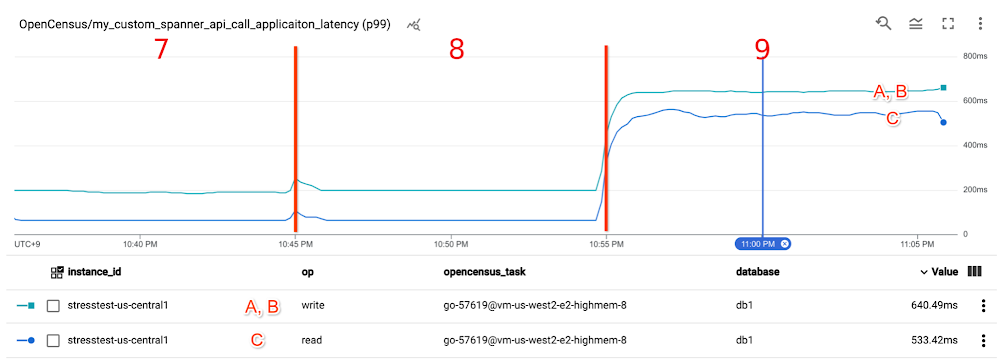

[Client] Custom metrics

my_custom_spanner_api_call_applicaiton_latency shows much higher latency in the last scenario. As you saw earlier, the server-side metrics, Cloud Spanner API request latency and Google Front End latency, didn't show such high latency at 11:00 PM.

[Client] Session pool metrics

The definition of every metric is as follows:

- num_in_use_sessions: the number of sessions in use.
- num_read_sessions: the number of idle sessions prepared for read-only transactions.
- num_write_prepared_sessions: the number of idle sessions prepared for read-write transactions.
- num_sessions_being_prepared: the number of sessions that are, at that moment, being used to execute a BeginTransaction call. The number is decreased once the BeginTransaction call has finished. (Note that this isn't used in the Java and C++ client libraries. Those libraries use the so-called Inlined BeginTransaction mechanism, and don't prepare transactions beforehand.)

In the last scenario at around 11:00 PM, you can see that num_in_use_sessions was always close to the max of 100, and some sessions were in num_sessions_being_prepared. This means that the session pool didn't have any idle sessions throughout the last scenario.

All things considered, you can assume that the high latency in my_custom_spanner_api_call_applicaiton_latency (i.e. the end-to-end call latency captured in the application code) was due to the lack of idle sessions in the session pool.

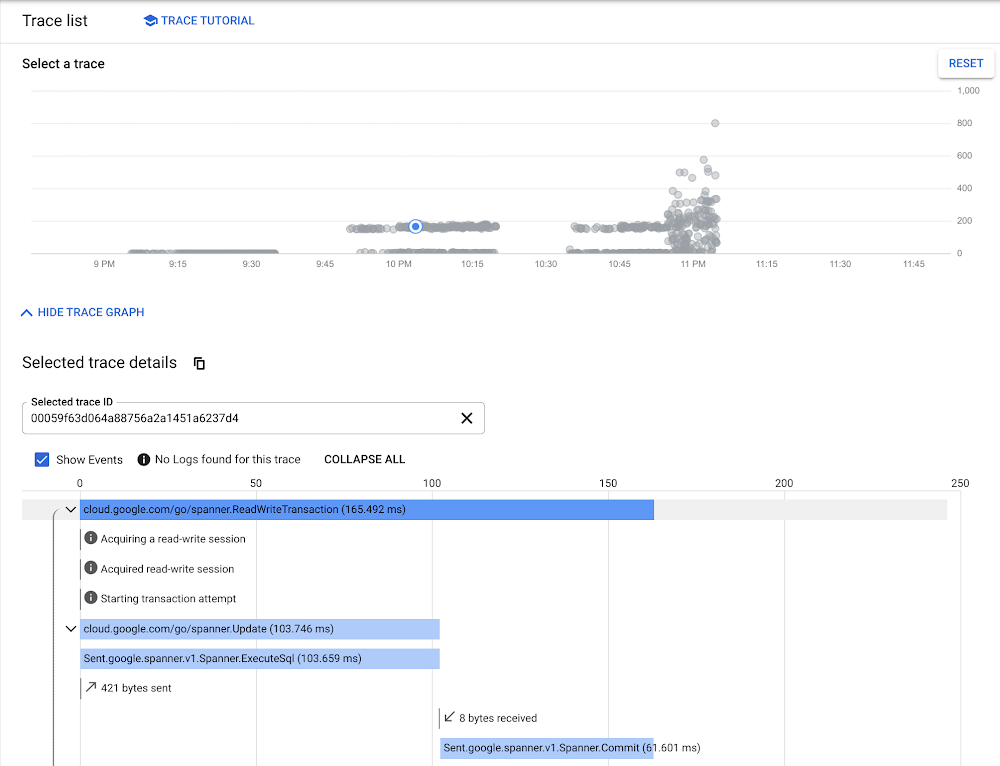

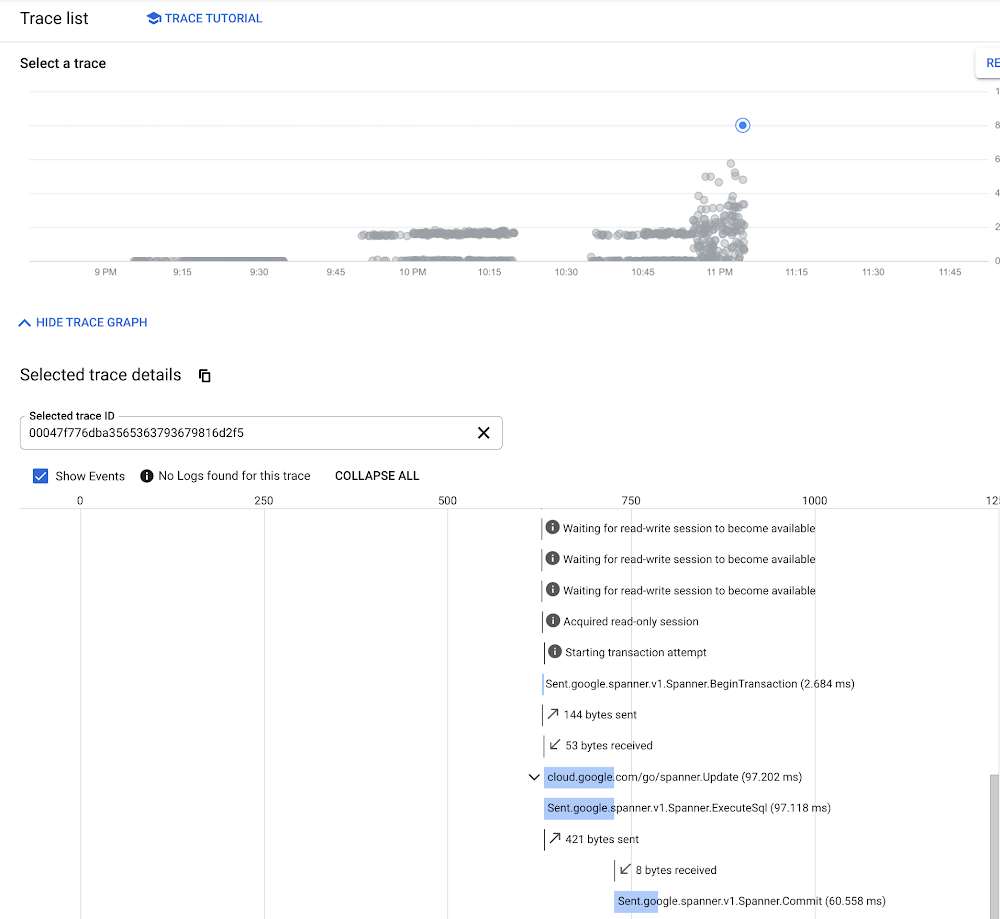
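The effect of an exhausted pool can be reproduced with a toy model. The sketch below is not the client library's session pool. It models sessions as tokens in a buffered channel, and `maxWaitMs` is a hypothetical helper. It shows that once there are more concurrent callers than sessions, callers spend time waiting before any request reaches the server, which is why only the client-side latency grows.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// maxWaitMs runs `agents` concurrent callers against a pool of `poolSize`
// sessions. Each caller takes a session, holds it for holdMs milliseconds
// (standing in for the request itself), and returns it. The result is the
// longest time, in milliseconds, that any caller waited for a session.
func maxWaitMs(poolSize, agents, holdMs int) int64 {
	pool := make(chan struct{}, poolSize) // one token per session
	for i := 0; i < poolSize; i++ {
		pool <- struct{}{}
	}

	var (
		mu    sync.Mutex
		worst int64
		wg    sync.WaitGroup
	)
	for i := 0; i < agents; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			start := time.Now()
			<-pool // blocks while every session is in use
			waited := time.Since(start).Milliseconds()

			time.Sleep(time.Duration(holdMs) * time.Millisecond) // the request
			pool <- struct{}{}                                   // return the session

			mu.Lock()
			if waited > worst {
				worst = waited
			}
			mu.Unlock()
		}()
	}
	wg.Wait()
	return worst
}

func main() {
	fmt.Println("enough sessions, max wait (ms):", maxWaitMs(4, 4, 30))
	fmt.Println("exhausted pool, max wait (ms):", maxWaitMs(2, 4, 30))
}
```

In both runs the simulated request takes the same 30 ms, so a server-side view would look identical. Only the time spent waiting for a session differs.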

Cloud Trace

You can double-check the hypothesis by looking into Cloud Trace. In an earlier scenario at around 10 PM, you can confirm in the trace events that "Acquired read-write session" occurred as soon as ReadWriteTransaction started.

On the other hand, in the last scenario at around 11:00 PM, the following trace tells that the transaction was slow because it couldn't acquire a read-write session. "Waiting for read-write session to become available" was repeated. Also, because the transaction was only able to get a read-only session ("Acquired read-only session"), it needed to start a read-write transaction by making a BeginTransaction call.

Remarks

Here are some remarks on the case study and on checking metrics:

1) There were some latency fluctuations in ExecuteSql and ExecuteStreamingSql. This is because, when an ExecuteSql or ExecuteStreamingSql call is made, PrepareQuery is executed internally and the compiled query plan and execution plan are cached. (See Behind the scenes of Cloud Spanner's ExecuteQuery request.) The caching happens at the Cloud Spanner API layer. If requests go through a different server, that server needs to cache them as well. The PrepareQuery call adds an additional round trip between the Cloud Spanner API Front End and the Cloud Spanner Database.

2) When you look at metrics, especially at the long tail (e.g. 99th percentile), you may see some strange latency results where server-side latency looks longer than client latency. This doesn't normally happen, but it can happen because of different sampling methods, such as the interval, timing, and distribution of the measurement points. You may want to check more general stats such as the 95th percentile or 50th percentile if you want to check the general trend.

3) Compared to the Google Front End latency, the client-side metric (my_custom_spanner_api_call_applicaiton_latency, for example) contains not only the time to acquire a session from the pool, but also various other latency factors. Some of the other latency factors are:

- Automatic retries against aborted transactions or some gRPC errors, depending on your client library. You can configure custom timeouts and retries.
- Creating additional sessions (you can see BatchCreateSessions latency spikes in Cloud Spanner API request latency).
- BeginTransaction for read-write transactions. Transactions are automatically prepared for the read-write sessions in the pool. BeginTransaction calls can also happen if read-write transactions need to use read sessions due to a lack of read-write sessions, or if the transactions for the read-write sessions are invalid or aborted.
- Round trip time between the client and Google Front End.
- Establishing a connection at the TCP level (TCP 3-way handshake) and at the TLS level (TLS handshake).

If your session pool metrics don't indicate an exhaustion situation, you may need to check other aspects.

4) You may see ExecuteSql calls outside of your application code. These are likely the ping requests made by the session pool library to keep the sessions alive.

5) If your client performs read-write transactions quite often and you often see your read-write transaction requests acquire a read-only session in your trace, setting a higher WriteSessions ratio would be beneficial, because a read-write session calls BeginTransaction beforehand, before the session is actually used. Manage the write-sessions fraction explains the default values for each library. On the other hand, if the traffic volume is low and sessions are often idle for a long time in your client, setting the WriteSessions ratio to 0 would be more beneficial. If the sessions are not used much, it's possible that the prepared transaction is already invalid and gets aborted. The cost of that failure is high compared to the benefit of preparing read-write transactions beforehand.

6) A BatchCreateSessions request is a costly and time-consuming operation. Therefore, it's recommended to set the same value for MinOpened and MaxOpened for the session pool.

Conclusion

By using custom metrics and traces, you can narrow down the cause of client-side latency that isn't visible in the default server-side metrics. I hope this article will help you identify a real cause more efficiently than before.
