[ad_1]

|

In March 2023, AWS and NVIDIA introduced a multipart collaboration targeted on constructing probably the most scalable, on-demand synthetic intelligence (AI) infrastructure optimized for coaching more and more advanced giant language fashions (LLMs) and growing generative AI purposes.

We preannounced Amazon Elastic Compute Cloud (Amazon EC2) P5 situations powered by NVIDIA H100 Tensor Core GPUs and AWS’s newest networking and scalability that may ship as much as 20 exaflops of compute efficiency for constructing and coaching the most important machine studying (ML) fashions. This announcement is the product of greater than a decade of collaboration between AWS and NVIDIA, delivering the visible computing, AI, and excessive efficiency computing (HPC) clusters throughout the Cluster GPU (cg1) situations (2010), G2 (2013), P2 (2016), P3 (2017), G3 (2017), P3dn (2018), G4 (2019), P4 (2020), G5 (2021), and P4de situations (2022).

Most notably, ML mannequin sizes at the moment are reaching trillions of parameters. However this complexity has elevated clients’ time to coach, the place the most recent LLMs at the moment are educated over the course of a number of months. HPC clients additionally exhibit related tendencies. With the constancy of HPC buyer information assortment growing and information units reaching exabyte scale, clients are in search of methods to allow quicker time to resolution throughout more and more advanced purposes.

Introducing EC2 P5 Cases

At this time, we’re saying the overall availability of Amazon EC2 P5 situations, the next-generation GPU situations to handle these buyer wants for prime efficiency and scalability in AI/ML and HPC workloads. P5 situations are powered by the most recent NVIDIA H100 Tensor Core GPUs and can present a discount of as much as 6 occasions in coaching time (from days to hours) in comparison with earlier technology GPU-based situations. This efficiency improve will allow clients to see as much as 40 p.c decrease coaching prices.

P5 situations present eight x NVIDIA H100 Tensor Core GPUs with 640 GB of excessive bandwidth GPU reminiscence, third Gen AMD EPYC processors, 2 TB of system reminiscence, and 30 TB of native NVMe storage. P5 situations additionally present 3200 Gbps of mixture community bandwidth with assist for GPUDirect RDMA, enabling decrease latency and environment friendly scale-out efficiency by bypassing the CPU on internode communication.

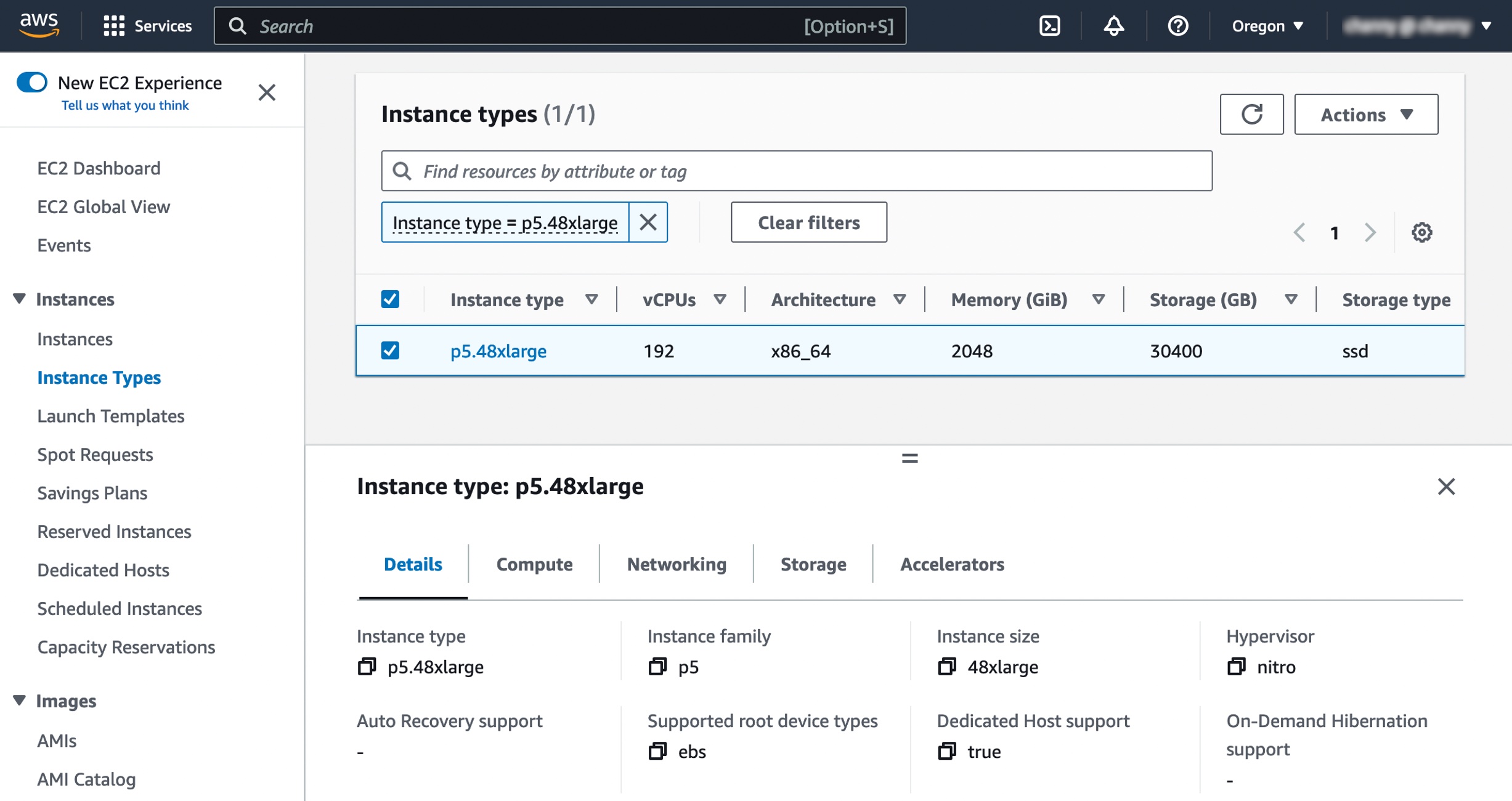

Right here is the specs for this occasion:

| Occasion Measurement | vCPUs | Reminiscence (GiB) | GPUs (H100) | Community Bandwidth (Gbps) | EBS Bandwidth (Gbps) | Native Storage (TB) |

| p5.48xlarge | 192 | 2048 | eight | 3200 | 80 | eight x three.84 |

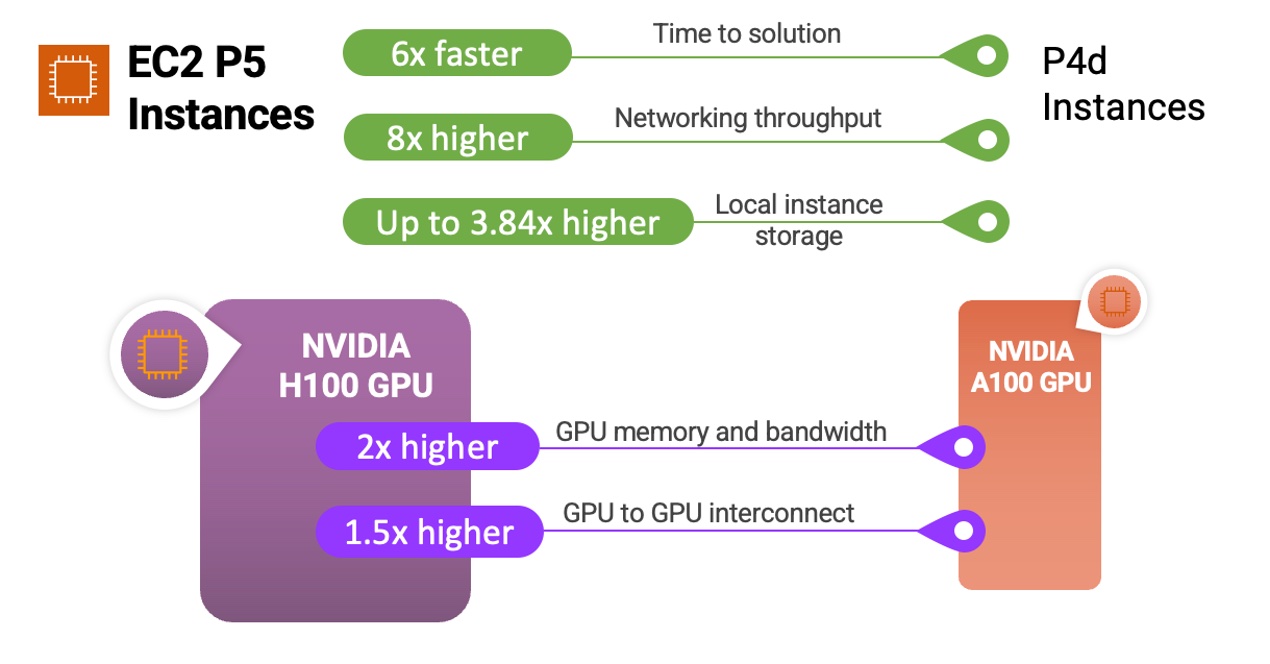

Right here’s a fast infographic that exhibits you the way the P5 situations and NVIDIA H100 Tensor Core GPUs examine to earlier situations and processors:

P5 situations are perfect for coaching and operating inference for more and more advanced LLMs and pc imaginative and prescient fashions behind probably the most demanding and compute-intensive generative AI purposes, together with query answering, code technology, video and picture technology, speech recognition, and extra. P5 will present as much as 6 occasions decrease time to coach in contrast with earlier technology GPU-based situations throughout these purposes. Prospects who can use decrease precision FP8 information sorts of their workloads, frequent in lots of language fashions that use a transformer mannequin spine, will see additional profit at as much as 6 occasions efficiency improve by means of assist for the NVIDIA transformer engine.

HPC clients utilizing P5 situations can deploy demanding purposes at better scale in pharmaceutical discovery, seismic evaluation, climate forecasting, and monetary modeling. Prospects utilizing dynamic programming (DP) algorithms for purposes like genome sequencing or accelerated information analytics can even see additional profit from P5 by means of assist for a brand new DPX instruction set.

This allows clients to discover drawback areas that beforehand appeared unreachable, iterate on their options at a quicker clip, and get to market extra rapidly.

You may see the element of occasion specs together with comparisons of occasion sorts between p4d.24xlarge and new p5.48xlarge under:

| Function | p4d.24xlarge | p5.48xlarge | Comparability |

| Quantity & Kind of Accelerators | eight x NVIDIA A100 | eight x NVIDIA H100 | – |

| FP8 TFLOPS per Server | – | 16,000 | 6.4x vs.A100 FP16 |

| FP16 TFLOPS per Server | 2,496 | eight,000 | |

| GPU Reminiscence | 40 GB | 80 GB | 2x |

| GPU Reminiscence Bandwidth | 12.eight TB/s | 26.eight TB/s | 2x |

| CPU Household | Intel Cascade Lake | AMD Milan | – |

| vCPUs | 96 | 192 | 2x |

| Whole System Reminiscence | 1152 GB | 2048 GB | 2x |

| Networking Throughput | 400 Gbps | 3200 Gbps | 8x |

| EBS Throughput | 19 Gbps | 80 Gbps | 4x |

| Native Occasion Storage | eight TBs NVMe | 30 TBs NVMe | three.75x |

| GPU to GPU Interconnect | 600 GB/s | 900 GB/s | 1.5x |

Second-generation Amazon EC2 UltraClusters and Elastic Cloth Adaptor

P5 situations present market-leading scale-out functionality for multi-node distributed coaching and tightly coupled HPC workloads. They provide as much as three,200 Gbps of networking utilizing the second-generation Elastic Cloth Adaptor (EFA) expertise, eight occasions in contrast with P4d situations.

To deal with buyer wants for large-scale and low latency, P5 situations are deployed within the second-generation EC2 UltraClusters, which now present clients with decrease latency throughout as much as 20,000+ NVIDIA H100 Tensor Core GPUs. Offering the most important scale of ML infrastructure within the cloud, P5 situations in EC2 UltraClusters ship as much as 20 exaflops of mixture compute functionality.

EC2 UltraClusters use Amazon FSx for Lustre, totally managed shared storage constructed on the most well-liked high-performance parallel file system. With FSx for Lustre, you possibly can rapidly course of large datasets on demand and at scale and ship sub-millisecond latencies. The low-latency and high-throughput traits of FSx for Lustre are optimized for deep studying, generative AI, and HPC workloads on EC2 UltraClusters.

FSx for Lustre retains the GPUs and ML accelerators in EC2 UltraClusters fed with information, accelerating probably the most demanding workloads. These workloads embody LLM coaching, generative AI inferencing, and HPC workloads, equivalent to genomics and monetary threat modeling.

Getting Began with EC2 P5 Cases

To get began, you need to use P5 situations within the US East (N. Virginia) and US West (Oregon) Area.

When launching P5 situations, you’ll select AWS Deep Studying AMIs (DLAMIs) to assist P5 situations. DLAMI offers ML practitioners and researchers with the infrastructure and instruments to rapidly construct scalable, safe distributed ML purposes in preconfigured environments.

It is possible for you to to run containerized purposes on P5 situations with AWS Deep Studying Containers utilizing libraries for Amazon Elastic Container Service (Amazon ECS) or Amazon Elastic Kubernetes Service (Amazon EKS). For a extra managed expertise, you may also use P5 situations through Amazon SageMaker, which helps builders and information scientists simply scale to tens, a whole bunch, or hundreds of GPUs to coach a mannequin rapidly at any scale with out worrying about organising clusters and information pipelines. HPC clients can leverage AWS Batch and ParallelCluster with P5 to assist orchestrate jobs and clusters effectively.

Present P4 clients might want to replace their AMIs to make use of P5 situations. Particularly, you’ll need to replace your AMIs to incorporate the most recent NVIDIA driver with assist for NVIDIA H100 Tensor Core GPUs. They can even want to put in the most recent CUDA model (CUDA 12), CuDNN model, framework variations (e.g., PyTorch, Tensorflow), and EFA driver with up to date topology recordsdata. To make this course of straightforward for you, we are going to present new DLAMIs and Deep Studying Containers that come prepackaged with all of the wanted software program and frameworks to make use of P5 situations out of the field.

Now Out there

Amazon EC2 P5 situations can be found at present in AWS Areas: US East (N. Virginia) and US West (Oregon). For extra data, see the Amazon EC2 pricing web page. To study extra, see EC2 P5 occasion web page and ship suggestions to AWS re:Put up for EC2 or by means of your normal AWS Assist contacts.

You may select a broad vary of AWS providers which have generative AI inbuilt, all operating on probably the most cost-effective cloud infrastructure for generative AI. To study extra, go to Generative AI on AWS to innovate quicker and reinvent your purposes.

— Channy

[ad_2]

Source link