Last December, Sébastien Stormacq wrote about the availability of a distributed map state for AWS Step Functions, a new feature that allows you to orchestrate large-scale parallel workloads in the cloud. That’s when Charles Burton, a data systems engineer for a company called CyberGRX, found out about it and refactored his workflow, reducing the processing time for his machine learning (ML) processing job from 8 days to 56 minutes. Before, running the job required an engineer to constantly monitor it; now, it runs in less than an hour with no support needed. In addition, the new implementation with AWS Step Functions Distributed Map costs less than the original one did.

What CyberGRX achieved with this solution is a perfect example of what serverless technologies embrace: letting the cloud do as much of the undifferentiated heavy lifting as possible so the engineers and data scientists have more time to focus on what’s important for the business. In this case, that means continuing to improve the model and the processes for one of the key offerings from CyberGRX, a cyber risk assessment of third parties using ML insights from its large and growing database.

What’s the business challenge?

CyberGRX shares third-party cyber risk management (TPCRM) data with their customers. They predict, with high confidence, how a third-party company will respond to a risk assessment questionnaire. To do this, they have to run their predictive model on every company in their platform; they currently have predictive data on more than 225,000 companies. Whenever there’s a new company or the data changes for a company, they regenerate their predictive model by processing their entire dataset. Over time, CyberGRX data scientists improve the model or add new features to it, which also requires the model to be regenerated.

The challenge is running this job for 225,000 companies in a timely manner, with as few hands-on resources as possible. The job runs a set of operations for each company, and every company calculation is independent of other companies. This means that in the ideal case, every company can be processed at the same time. However, implementing such a massive parallelization is a challenging problem to solve.

First iteration

With that in mind, the company built their first iteration of the pipeline using Kubernetes and Argo Workflows, an open-source container-native workflow engine for orchestrating parallel jobs on Kubernetes. These were tools they were familiar with, as they were already using them in their infrastructure.

But as soon as they tried to run the job for all the companies on the platform, they ran up against the limits of what their system could handle efficiently. Because the solution depended on a centralized controller, Argo Workflows, it was not robust, and the controller was scaled to its maximum capacity during this time. At that time, they only had 150,000 companies. Running the job with all of the companies took around 8 days, during which the system would crash and need to be restarted. It was very labor intensive, and it always required an engineer on call to monitor and troubleshoot the job.

The tipping point came when Charles joined the Analytics team at the beginning of 2022. One of his first tasks was to do a full model run on the approximately 170,000 companies in the platform at that time. The model run lasted the whole week and ended at 2:00 AM on a Sunday. That’s when he decided their system needed to evolve.

Second iteration

With the pain of the last time he ran the model fresh in his mind, Charles thought through how he could rewrite the workflow. His first thought was to use AWS Lambda and Amazon SQS, but he realized that he needed an orchestrator in that solution. That’s why he chose Step Functions, a serverless service that helps you automate processes, orchestrate microservices, and create data and ML pipelines; plus, it scales as needed.

Charles got the new version of the workflow with Step Functions working in about 2 weeks. The first step he took was adapting his existing Docker image to run in Lambda using Lambda’s container image packaging format. Because the container already worked for his data processing tasks, this update was simple. He scheduled Lambda provisioned concurrency to make sure that all the functions he needed were ready when he started the job. He also configured reserved concurrency to make sure that Lambda would be able to handle this maximum number of concurrent executions at a time. To support so many functions executing at the same time, he raised the concurrent execution quota for Lambda per account.
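As a rough illustration of the two concurrency settings mentioned above, the following Python sketch builds the keyword arguments for the boto3 Lambda client calls `put_function_concurrency` and `put_provisioned_concurrency_config`. The function name, alias, and concurrency value are hypothetical, and setting provisioned concurrency directly (rather than on a schedule, as Charles did) is a simplification made for the example.

```python
from typing import Any, Dict, List


def concurrency_requests(function_name: str, alias: str,
                         concurrency: int) -> List[Dict[str, Any]]:
    """Describe the reserved and provisioned concurrency requests.

    Hypothetical helper: it only builds the request arguments, so it can be
    inspected without AWS credentials.
    """
    if concurrency < 1:
        raise ValueError("concurrency must be a positive integer")
    return [
        {
            # Reserved concurrency: capacity set aside for this function.
            "operation": "put_function_concurrency",
            "kwargs": {
                "FunctionName": function_name,
                "ReservedConcurrentExecutions": concurrency,
            },
        },
        {
            # Provisioned concurrency: pre-initialized execution environments
            # for a published version or alias (assumed here to be an alias).
            "operation": "put_provisioned_concurrency_config",
            "kwargs": {
                "FunctionName": function_name,
                "Qualifier": alias,
                "ProvisionedConcurrentExecutions": concurrency,
            },
        },
    ]


def apply_requests(lambda_client: Any, requests: List[Dict[str, Any]]) -> None:
    """Send each request through a boto3 Lambda client (or a test double)."""
    for request in requests:
        getattr(lambda_client, request["operation"])(**request["kwargs"])
```

With real credentials, `apply_requests(boto3.client("lambda"), concurrency_requests("model-runner", "live", 1000))` would apply both settings; the account-level concurrent execution quota is raised separately through a quota increase request.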

To make sure that the steps ran in parallel, he used Step Functions and the map state. The map state allowed Charles to run a set of workflow steps for each item in a dataset, with the iterations running in parallel. Because the Step Functions map state offers 40 concurrent executions and CyberGRX needed more parallelization, they created a solution that launched multiple state machines in parallel; in this way, they were able to iterate fast across all the companies. Creating this complex solution required a preprocessor that handled the heuristics of the concurrency of the system and split the input data across multiple state machines.
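The article does not describe the preprocessor’s actual heuristics, so the following Python sketch only illustrates the general idea under a simple assumption: split the company list evenly across enough state machines to reach a target level of parallelism, given 40 concurrent iterations per map state. The function names and the even-split strategy are illustrative, not CyberGRX’s implementation.

```python
from typing import List, Sequence

MAP_STATE_CONCURRENCY = 40  # concurrent iterations per map state, per the article


def machines_needed(target_parallelism: int,
                    per_machine: int = MAP_STATE_CONCURRENCY) -> int:
    """Number of state machine executions needed to reach the target."""
    if target_parallelism < 1 or per_machine < 1:
        raise ValueError("both arguments must be positive")
    return -(-target_parallelism // per_machine)  # ceiling division


def split_across_machines(items: Sequence[str],
                          machines: int) -> List[List[str]]:
    """Split items into `machines` contiguous batches of near-equal size."""
    if machines < 1:
        raise ValueError("machines must be positive")
    base, extra = divmod(len(items), machines)
    batches: List[List[str]] = []
    start = 0
    for index in range(machines):
        # The first `extra` batches take one additional item each.
        size = base + (1 if index < extra else 0)
        batches.append(list(items[start:start + size]))
        start += size
    return batches
```

For example, reaching 1,000 parallel iterations this way takes `machines_needed(1000)` = 25 state machine executions, each fed one batch from `split_across_machines`. Managing that fan-out by hand is the complexity the preprocessor had to absorb.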

This second iteration was already better than the first one: it was able to finish the execution with no problems, and it could iterate over 200,000 companies in 90 minutes. However, the preprocessor was a very complex part of the system, and it was hitting the limits of the Lambda and Step Functions APIs due to the amount of parallelization.

Third and final iteration

Then, during AWS re:Invent 2022, AWS announced a distributed map for Step Functions, a new type of map state that allows you to write Step Functions workflows that coordinate large-scale parallel workloads. Using this new feature, you can easily iterate over millions of objects stored in Amazon Simple Storage Service (Amazon S3), and then the distributed map can launch up to 10,000 parallel sub-workflows to process the data.

When Charles read in the News Blog article about the 10,000 parallel workflow executions, he immediately thought about trying this new state. In a couple of weeks, Charles built the new iteration of the workflow.

Because the distributed map state split the input into different processors and handled the concurrency of the different executions, Charles was able to drop the complex preprocessor code.
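To give a sense of what replaces the preprocessor, here is a minimal Python sketch that builds an Amazon States Language definition for a distributed map state reading its items from a file in Amazon S3. The bucket, key, Lambda ARN, and state name are hypothetical, and the JSON input format is an assumption, since the article does not say how the input file is structured.

```python
import json
from typing import Any, Dict


def distributed_map_state(bucket: str, key: str, lambda_arn: str,
                          max_concurrency: int = 10_000) -> Dict[str, Any]:
    """Build a distributed map state that runs one Lambda task per item."""
    return {
        "Type": "Map",
        # Upper bound on child workflow executions running at once.
        "MaxConcurrency": max_concurrency,
        "ItemReader": {
            # Read the item list from a single S3 object
            # (assumed here to be a JSON array).
            "Resource": "arn:aws:states:::s3:getObject",
            "ReaderConfig": {"InputType": "JSON"},
            "Parameters": {"Bucket": bucket, "Key": key},
        },
        "ItemProcessor": {
            # DISTRIBUTED mode runs each iteration as a child execution.
            "ProcessorConfig": {
                "Mode": "DISTRIBUTED",
                "ExecutionType": "STANDARD",
            },
            "StartAt": "ProcessCompany",
            "States": {
                "ProcessCompany": {
                    "Type": "Task",
                    "Resource": "arn:aws:states:::lambda:invoke",
                    "Parameters": {
                        "FunctionName": lambda_arn,
                        "Payload.$": "$",
                    },
                    "End": True,
                }
            },
        },
        "End": True,
    }


if __name__ == "__main__":
    state = distributed_map_state(
        bucket="example-input-bucket",           # hypothetical
        key="runs/companies.json",               # hypothetical
        lambda_arn="arn:aws:lambda:us-east-1:123456789012:function:process-company",
    )
    print(json.dumps({"StartAt": "ProcessAll",
                      "States": {"ProcessAll": state}}, indent=2))
```

The splitting and concurrency handling that previously lived in custom code are expressed here by the `ItemReader` and `MaxConcurrency` fields.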

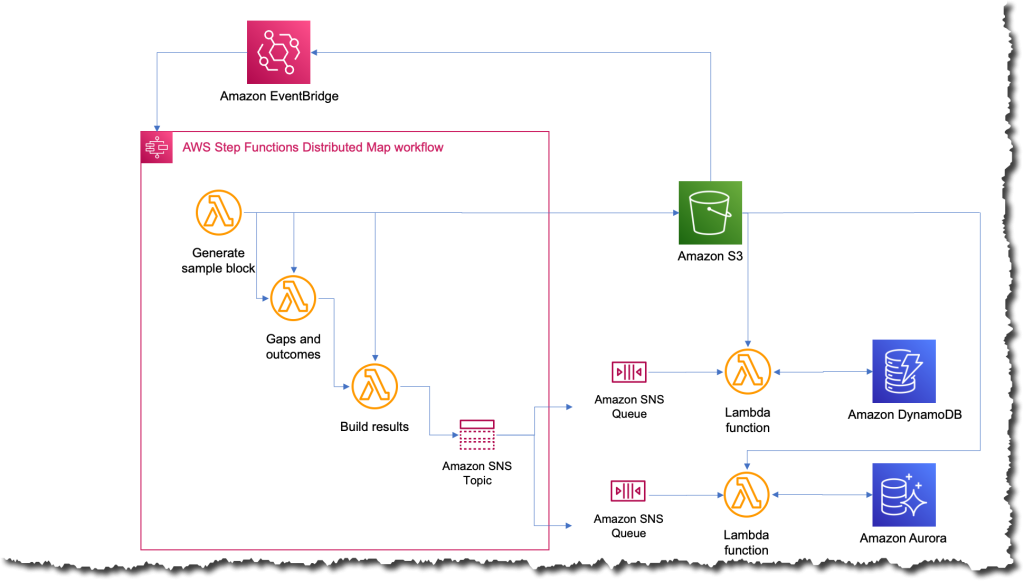

The new process is the simplest that it’s ever been; now whenever they want to run the job, they just upload a file to Amazon S3 with the input data. This action triggers an Amazon EventBridge rule that targets the state machine with the distributed map. The state machine then executes with that file as an input and publishes the results to an Amazon Simple Notification Service (Amazon SNS) topic.
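The trigger described above can be sketched as an EventBridge event pattern that matches S3 "Object Created" events, plus a small helper that pulls the uploaded file’s location out of the event. The bucket name and key prefix are hypothetical, and this is only one plausible way to wire the rule; it also assumes EventBridge notifications are enabled on the bucket.

```python
from typing import Any, Dict, Tuple


def upload_event_pattern(bucket: str, key_prefix: str) -> Dict[str, Any]:
    """EventBridge pattern matching new objects under a prefix in a bucket."""
    return {
        "source": ["aws.s3"],
        "detail-type": ["Object Created"],
        "detail": {
            "bucket": {"name": [bucket]},
            "object": {"key": [{"prefix": key_prefix}]},
        },
    }


def input_location(event: Dict[str, Any]) -> Tuple[str, str]:
    """Return the (bucket, key) of the uploaded file from the event."""
    detail = event["detail"]
    return detail["bucket"]["name"], detail["object"]["key"]


if __name__ == "__main__":
    # Trimmed-down sample event with only the fields used here.
    sample_event = {
        "source": "aws.s3",
        "detail-type": "Object Created",
        "detail": {
            "bucket": {"name": "example-input-bucket"},
            "object": {"key": "runs/companies.json"},
        },
    }
    print(upload_event_pattern("example-input-bucket", "runs/"))
    print(input_location(sample_event))
```

The state machine receives the matched event as its execution input, so the bucket and key are available to the distributed map’s item reader.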

What was the impact?

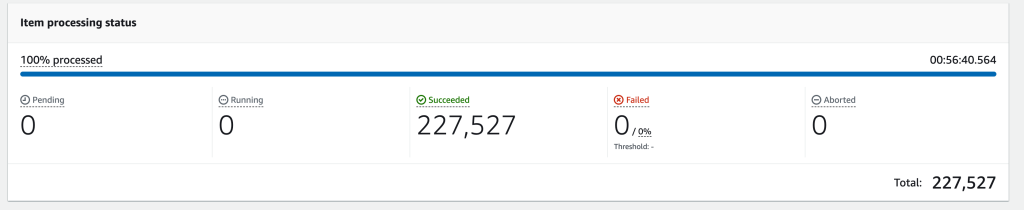

A few weeks after completing the third iteration, they had to run the job on all 227,000 companies in their platform. When the job finished, Charles’ team was blown away; the whole process took only 56 minutes to complete. They estimated that during those 56 minutes, the job ran more than 57 billion calculations.
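To put those figures in perspective, the short Python calculation below derives a few rates from the numbers quoted in this article. The speedup is only indicative, because the 8-day run covered fewer companies than the 56-minute run, and the calculations-per-second figure is a lower bound, since the estimate was "more than" 57 billion.

```python
MINUTES_PER_DAY = 24 * 60

OLD_RUNTIME_MINUTES = 8 * MINUTES_PER_DAY   # first iteration: about 8 days
NEW_RUNTIME_MINUTES = 56                    # third iteration
COMPANIES = 227_000
CALCULATIONS = 57_000_000_000               # "more than 57 billion"


def speedup() -> float:
    """How many times shorter the new run is than the old one."""
    return OLD_RUNTIME_MINUTES / NEW_RUNTIME_MINUTES


def companies_per_minute() -> float:
    """Average number of companies processed per minute."""
    return COMPANIES / NEW_RUNTIME_MINUTES


def calculations_per_second() -> float:
    """Average calculations per second over the 56-minute run."""
    return CALCULATIONS / (NEW_RUNTIME_MINUTES * 60)


if __name__ == "__main__":
    print(f"Speedup: about {speedup():.0f}x")                      # about 206x
    print(f"Companies per minute: {companies_per_minute():,.0f}")  # 4,054
    print(f"Calculations per second: "
          f"{calculations_per_second() / 1e6:.1f} million")        # 17.0 million
```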

The accompanying image shows an Amazon CloudWatch graph of the concurrent executions for one Lambda function during the time that the workflow was running. There are almost 10,000 functions running in parallel during this time.

Simplifying and shortening the time to run the job opens a lot of possibilities for CyberGRX and the data science team. The benefits started right away, the moment one of the data scientists wanted to run the job to test some improvements they had made to the model. They were able to run it independently without requiring an engineer to help them.

And, because the predictive model itself is one of the key offerings from CyberGRX, the company now has a more competitive product since the predictive analysis can be refined on a daily basis.

Learn more about using AWS Step Functions:

You can also check the Serverless Workflows Collection that we have available in Serverless Land for you to test and learn more about this new capability.

— Marcia
