
Today we’re excited to announce the release of over twenty new BigQuery and BigQuery ML (BQML) operators for Vertex AI Pipelines, which make it easier to operationalize BigQuery and BQML jobs in a Vertex AI Pipeline. The first five BigQuery and BQML pipeline components were released earlier this year. These twenty-one new, first-party, Google Cloud-supported components help data scientists, data engineers, and other users incorporate all of Google Cloud’s BQML capabilities, including forecasting, explainable AI, and MLOps.

The seamless integration between BQML and Vertex AI helps automate and monitor the entire life cycle of BQML models, from training to serving. Developers, especially ML engineers, no longer have to write bespoke code in order to include BQML workflows in their ML pipelines. They can now simply include these new BQML components in their pipelines natively, making it easier and faster to deploy end-to-end ML lifecycle pipelines.

In addition, using these components as part of a Vertex AI Pipeline provides data and model governance. Any time a pipeline is executed, Vertex AI Pipelines automatically tracks and manages any artifacts produced.

For BigQuery, the following components are available:

| Category | Component | Description |
|---|---|---|
| Query | BigqueryQueryJobOp | Allows users to submit an arbitrary BQ query whose results are written to either a temporary or a permanent table. Launches a BigQuery query job and waits for it to finish. |

For BigQuery ML (BQML), the following components are now available:

| Category | Component | Description |
|---|---|---|
| Core | BigqueryCreateModelJobOp | Allows users to submit a DDL statement to create a BigQuery ML model. |
| | BigqueryEvaluateModelJobOp | Allows users to evaluate a BigQuery ML model. |
| | BigqueryPredictModelJobOp | Allows users to make predictions using a BigQuery ML model. |
| | BigqueryExportModelJobOp | Allows users to export a BigQuery ML model to a Google Cloud Storage bucket. |
| New Components | | |
| Forecasting | BigqueryForecastModelJobOp | Launches a BigQuery ML.FORECAST job and lets you forecast with an ARIMA_PLUS or ARIMA model. |
| | BigqueryExplainForecastModelJobOp | Launches a BigQuery ML.EXPLAIN_FORECAST job and lets you forecast with an ARIMA_PLUS or ARIMA model. |
| | BigqueryMLArimaEvaluateJobOp | Launches a BigQuery ML.ARIMA_EVALUATE job and waits for it to finish. |
| Anomaly | BigqueryDetectAnomaliesModelJobOp | Launches a BigQuery detect anomaly model job and waits for it to finish. |
| Model Evaluation | BigqueryMLConfusionMatrixJobOp | Launches a BigQuery confusion matrix job and waits for it to finish. |
| | BigqueryMLCentroidsJobOp | Launches a BigQuery ML.CENTROIDS job and waits for it to finish. |
| | BigqueryMLTrainingInfoJobOp | Launches a BigQuery ML training info fetching job and waits for it to finish. |
| | BigqueryMLTrialInfoJobOp | Launches a BigQuery ML trial info job and waits for it to finish. |
| | BigqueryMLRocCurveJobOp | Launches a BigQuery ROC curve job and waits for it to finish. |
| Explainable AI | BigqueryMLGlobalExplainJobOp | Launches a BigQuery global explain fetching job and waits for it to finish. |
| | BigqueryMLFeatureInfoJobOp | Launches a BigQuery feature info job and waits for it to finish. |
| | BigqueryMLFeatureImportanceJobOp | Launches a BigQuery feature importance fetch job and waits for it to finish. |
| Model Weights | BigqueryMLWeightsJobOp | Launches a BigQuery ML weights job and waits for it to finish. |
| | BigqueryMLAdvancedWeightsJobOp | Launches a BigQuery ML advanced weights job and waits for it to finish. |
| | BigqueryMLPrincipalComponentsJobOp | Launches a BigQuery ML.PRINCIPAL_COMPONENTS job and waits for it to finish. |
| | BigqueryMLPrincipalComponentInfoJobOp | Launches a BigQuery ML.PRINCIPAL_COMPONENT_INFO job and waits for it to finish. |
| | BigqueryMLArimaCoefficientsJobOp | Launches a BigQuery ML.ARIMA_COEFFICIENTS job and lets you see the ARIMA coefficients. |
| Model Inference | BigqueryMLReconstructionLossJobOp | Launches a BigQuery ML reconstruction loss job and waits for it to finish. |
| | BigqueryExplainPredictModelJobOp | Launches a BigQuery explain predict model job and waits for it to finish. |
| | BigqueryMLRecommendJobOp | Launches a BigQuery ML.RECOMMEND job and waits for it to finish. |
| Other | BigqueryDropModelJobOp | Launches a BigQuery drop model job and waits for it to finish. |

Now that you have a broad overview of all the pipeline operators available for BQML, let’s see how to use the forecasting ones in an end-to-end example of building demand forecast predictions. You’ll find the code in the Vertex AI samples repo on GitHub.

Example of a demand forecast predictions pipeline in BigQuery ML

In this section, we’ll show an end-to-end example of using BigQuery and BQML components in a Vertex AI Pipeline for demand forecasting. The pipeline is based on the “Solving for food waste with data analytics” blog post. In this scenario, a fictitious grocer, FastFresh, which specializes in fresh food distribution, wants to minimize food waste and optimize stock levels across all stores. Because inventory is updated frequently (every minute, for every single item), they want to train a demand forecasting model on an hourly basis. With 24 training jobs per day, they want to automate model training with an ML pipeline, using the pipeline operators for BQML ARIMA_PLUS, the forecasting model type in BQML.
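To make the hourly granularity concrete, here is a minimal sketch of rolling minute-level sales up into hourly totals. In the pipeline itself this aggregation would be done in BigQuery; the function name `aggregate_hourly` and the sample records are hypothetical, and the output keys simply reuse the column names that appear in the training query (`hourly_timestamp`, `product_name`, `total_sold`).

```python
from collections import defaultdict
from datetime import datetime


def aggregate_hourly(records):
    """Sum units sold per (hour, product) from minute-level records.

    Each record is a (timestamp, product_name, units_sold) tuple.
    """
    totals = defaultdict(int)
    for timestamp, product_name, units_sold in records:
        # Truncate the timestamp to the start of its hour.
        hour = timestamp.replace(minute=0, second=0, microsecond=0)
        totals[(hour, product_name)] += units_sold
    return [
        {"hourly_timestamp": hour, "product_name": name, "total_sold": total}
        for (hour, name), total in sorted(totals.items())
    ]


# Hypothetical minute-level sales, for illustration only.
sales = [
    (datetime(2022, 1, 1, 9, 5), "apples", 3),
    (datetime(2022, 1, 1, 9, 40), "apples", 2),
    (datetime(2022, 1, 1, 10, 15), "apples", 4),
]
hourly = aggregate_hourly(sales)
for row in hourly:
    print(row)
```

Each output row corresponds to one hour of one product, which is the shape a time series model with a timestamp column, a data column, and an ID column expects.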

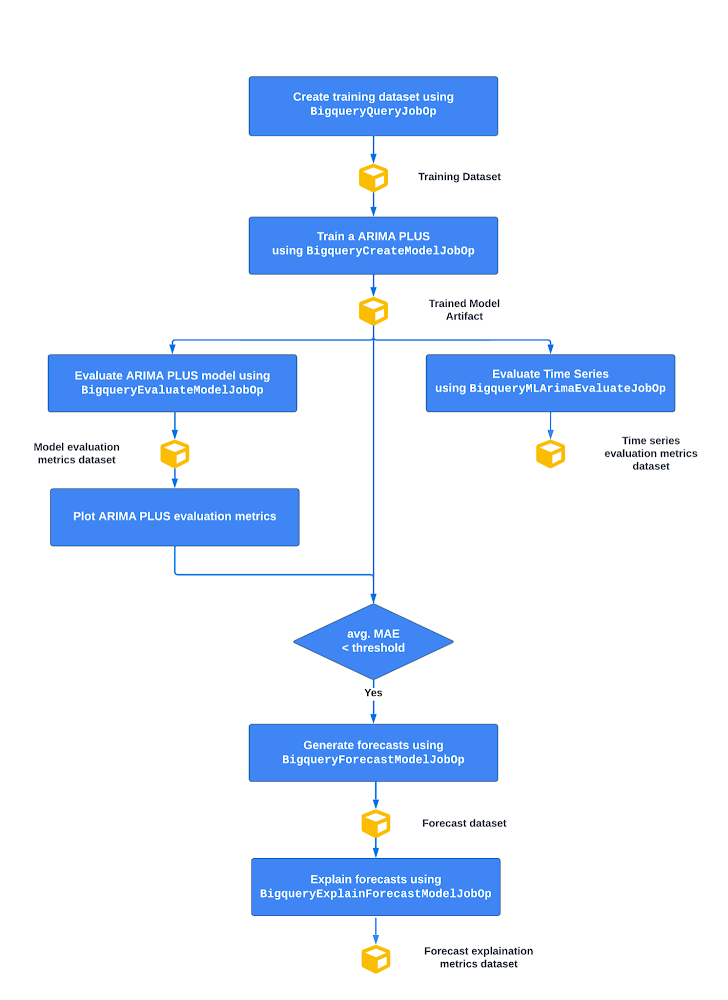

Beneath you’ll be able to see a excessive stage image of the pipeline circulate

From top to bottom:

- Create the training dataset in BigQuery
- Train a BigQuery ML ARIMA_PLUS model
- Evaluate ARIMA_PLUS time series and model metrics

Then, if the average mean absolute error (MAE), which measures the mean of the absolute value of the difference between the forecasted value and the actual value, is less than a certain threshold:

- Generate time series forecasts based on a trained time series ARIMA_PLUS model
- Generate separate time series components of both the training and the forecasting data to explain predictions
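For reference, the MAE used in the threshold check above can be written as follows, where $y_i$ is the actual value, $\hat{y}_i$ is the forecasted value, and $n$ is the number of evaluated time points (the symbols are our own notation, not taken from the BigQuery ML documentation):

$$
\mathrm{MAE} = \frac{1}{n} \sum_{i=1}^{n} \left| \hat{y}_i - y_i \right|
$$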

Let’s dive into the pipeline operators for BQML ARIMA_PLUS.

Training a demand forecasting model

Once you have the training data (as a table), you’re ready to build a demand forecasting model with the ARIMA_PLUS algorithm. You can automate this BQML model creation operation within a Vertex AI Pipeline using the BigqueryCreateModelJobOp. As we discussed in the previous article, this component lets you pass the BQML training query to submit the training of an ARIMA_PLUS model on BigQuery. The component returns a google.BQMLModel, which is recorded in Vertex ML Metadata so that you can keep track of the lineage of all artifacts. Below you’ll find the model training operator, where the set_display_name method lets you name the component during execution, and the after method lets you control the sequential order of the pipeline steps.

```python
# Submit the CREATE MODEL statement once the training dataset step has finished.
bq_arima_model_op = BigqueryCreateModelJobOp(
    query=f"""
    -- create model table
    CREATE OR REPLACE MODEL `project.bq_dataset.bq_model_table`
    OPTIONS(
        MODEL_TYPE = 'ARIMA_PLUS',
        TIME_SERIES_TIMESTAMP_COL = 'hourly_timestamp',
        TIME_SERIES_DATA_COL = 'total_sold',
        TIME_SERIES_ID_COL = ['product_name'],
        MODEL_REGISTRY = 'vertex_ai',
        VERTEX_AI_MODEL_ID = 'order_demand_forecasting',
        VERTEX_AI_MODEL_VERSION_ALIASES = ['staging']
    ) AS
    SELECT
        hourly_timestamp,
        product_name,
        total_sold
    FROM `project.bq_dataset.`
    WHERE split='TRAIN';
    """,
    project=project,
    location=location,
).set_display_name("train arima plus model").after(create_training_dataset_op)
```

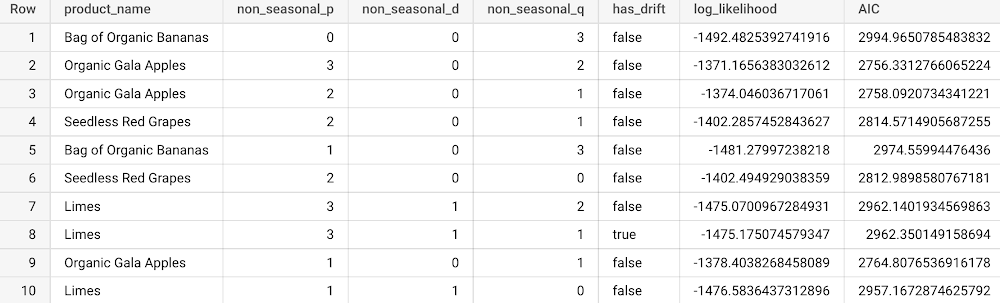

Evaluating time series and model metrics

Once you train the ARIMA_PLUS model, you need to evaluate it before generating predictions. In BigQuery ML, you have the ML.ARIMA_EVALUATE and ML.EVALUATE functions. The ML.ARIMA_EVALUATE function generates both statistical metrics, such as log_likelihood, AIC, and variance, and other time series information about seasonality, holiday effects, spikes-and-dips outliers, and so on, for all the ARIMA models trained with the automatic hyperparameter tuning that is enabled by default (auto.ARIMA). The ML.EVALUATE function retrieves forecasting accuracy metrics such as the mean absolute error (MAE) and the mean squared error (MSE). To integrate these evaluation functions into a Vertex AI pipeline, you can now use the corresponding BigqueryMLArimaEvaluateJobOp and BigqueryEvaluateModelJobOp operators. In both cases they take a google.BQMLModel as input and return an Evaluation Metrics artifact as output.
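As a rough illustration of the two BigQuery ML functions these operators correspond to, the sketch below builds the equivalent SQL statements as plain strings. The helper names, the model path, and the test table are placeholders of ours; the operators assemble their own queries internally, so treat this as an approximation rather than their actual implementation.

```python
def arima_evaluate_sql(model, show_all_candidate_models=False):
    """Build an ML.ARIMA_EVALUATE statement for a fully qualified model name."""
    flag = "TRUE" if show_all_candidate_models else "FALSE"
    return (
        f"SELECT * FROM ML.ARIMA_EVALUATE(MODEL `{model}`, "
        f"STRUCT({flag} AS show_all_candidate_models))"
    )


def evaluate_sql(model, test_query):
    """Build an ML.EVALUATE statement that scores the model on test rows."""
    return f"SELECT * FROM ML.EVALUATE(MODEL `{model}`, ({test_query}))"


# Placeholder identifiers, for illustration only.
model_name = "my_project.my_dataset.my_arima_model"
test_rows = "SELECT * FROM `my_project.my_dataset.my_table` WHERE split = 'TEST'"

print(arima_evaluate_sql(model_name))
print(evaluate_sql(model_name, test_rows))
```

The first statement reports on the fitted ARIMA candidates themselves, while the second compares forecasts against held-out rows, which is why only the second one needs a test query.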

For the BigqueryMLArimaEvaluateJobOp, here is an example of it used in a pipeline component:

```python
# Evaluate the time series statistics of the trained ARIMA_PLUS model.
bq_arima_evaluate_time_series_op = BigqueryMLArimaEvaluateJobOp(
    project=project,
    location=location,
    model=bq_arima_model_op.outputs['model'],
    show_all_candidate_models='false',
    job_configuration_query=bq_evaluate_time_series_configuration,
).set_display_name("evaluate arima plus time series").after(bq_arima_model_op)
```

Below is the view of statistical metrics (the first five columns) resulting from the BigqueryMLArimaEvaluateJobOp operator in a BigQuery table.

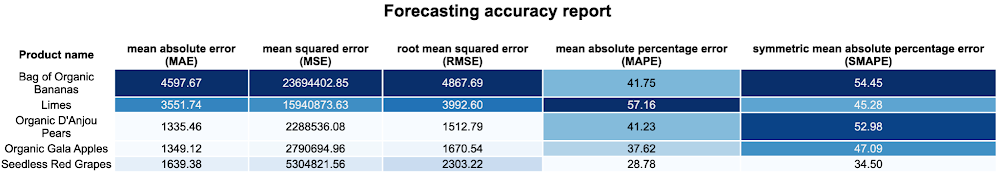

For the BigqueryEvaluateModelJobOp, below you have the corresponding pipeline component:

```python
# Compute forecasting accuracy metrics on the held-out TEST split.
bq_arima_evaluate_model_op = BigqueryEvaluateModelJobOp(
    project=project,
    location=location,
    model=bq_arima_model_op.outputs['model'],
    query_statement=f"SELECT * FROM `<my_project_id>.<my_dataset_id>.<train_table_id>` WHERE split='TEST'",
    job_configuration_query=bq_evaluate_model_configuration,
).set_display_name("evaluate arima_plus model").after(bq_arima_model_op)
```

Here, the query statement selects the test sample used to generate the evaluation forecast metrics.

Because these metrics are stored as Evaluation Metrics artifacts in Vertex ML Metadata, you can consume them afterwards for visualizations in the Vertex AI Pipelines UI using the Kubeflow SDK visualization APIs. Indeed, Vertex AI lets you render HTML in an output page that is easily accessible from the Google Cloud console. Below is an example of a custom forecasting HTML report you can create.

You can also use these values to implement conditional if-else logic in the pipeline graph using the Kubeflow SDK condition. In this scenario, a model performance condition has been implemented using the average mean squared error: if the trained model’s average mean squared error is below a certain threshold, the model can be used to generate forecast predictions.
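The gating decision itself is simple arithmetic. The sketch below is plain Python rather than Kubeflow SDK code: it averages a `mean_squared_error` field over per-time-series evaluation rows and compares the result to a threshold. The row layout, the function name `should_forecast`, and the threshold value are assumptions for illustration; in the pipeline, the comparison would be expressed as a condition on the evaluation step’s output.

```python
from statistics import mean


def should_forecast(evaluation_rows, threshold):
    """Return True when the average MSE across time series is below threshold."""
    if not evaluation_rows:
        # With no metrics there is nothing to justify forecasting.
        return False
    avg_mse = mean(row["mean_squared_error"] for row in evaluation_rows)
    return avg_mse < threshold


# Hypothetical evaluation output: one row per product time series.
rows = [
    {"product_name": "apples", "mean_squared_error": 2.0},
    {"product_name": "bananas", "mean_squared_error": 4.0},
]
decision = should_forecast(rows, threshold=5.0)  # the average MSE here is 3.0
print(decision)
```

Returning `False` for an empty result is a deliberately conservative choice in this sketch, so that a failed or empty evaluation never unlocks the forecasting branch.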

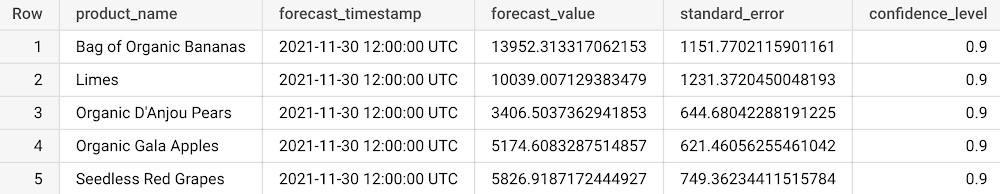

Generate and explain demand forecasts

To generate forecasts for the next n hours, you can use the BigqueryForecastModelJobOp, which launches a BigQuery forecast model job. The component consumes the google.BQMLModel as an input artifact and lets you set the number of time points to forecast (horizon) and the percentage of the future values that fall in the prediction interval (confidence_level). In the example below, we generate hourly forecasts with a confidence level of 90%.

```python
# Forecast one hour ahead with a 90% confidence level.
bq_arima_forecast_op = BigqueryForecastModelJobOp(
    project=project,
    location=location,
    model=bq_arima_model_op.outputs['model'],
    horizon=1,
    confidence_level=0.9,
    job_configuration_query=bq_forecast_configuration,
).set_display_name("generate hourly forecasts").after(get_evaluation_model_metrics_op)
```
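To give a feel for what `confidence_level` controls, the following sketch derives a symmetric prediction interval from a forecast value and its standard error under a normal approximation. That approximation is an assumption made for illustration; BigQuery ML computes its own interval bounds, which may not match this calculation exactly. The function name and the sample numbers are hypothetical.

```python
from statistics import NormalDist


def prediction_interval(forecast_value, standard_error, confidence_level=0.9):
    """Return (lower, upper) bounds assuming normally distributed errors."""
    if not 0.0 < confidence_level < 1.0:
        raise ValueError("confidence_level must be strictly between 0 and 1")
    # Two-sided critical value, for example about 1.645 for a 90% level.
    z = NormalDist().inv_cdf(0.5 + confidence_level / 2.0)
    margin = z * standard_error
    return forecast_value - margin, forecast_value + margin


# Hypothetical forecast of 120 units with a standard error of 10 units.
lower, upper = prediction_interval(forecast_value=120.0, standard_error=10.0)
print(f"90% interval: [{lower:.1f}, {upper:.1f}]")
```

Raising the confidence level widens the interval, so a higher value trades precision for a greater chance of covering the actual demand.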

The forecasts are then materialized in a predefined destination table using the job_configuration_query parameter, and the table is tracked as a google.BQTable in Vertex ML Metadata. Below is an example of the forecast table you’ll get (only five columns are shown).
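Returning to the `job_configuration_query` parameter, a destination table could be described along these lines. The sketch assumes the parameter accepts a dictionary shaped like the BigQuery REST API’s query job configuration (`destinationTable`, `writeDisposition`); the helper name and all identifiers are placeholders, so check the component reference before relying on this shape.

```python
import json


def destination_config(project_id, dataset_id, table_id, overwrite=True):
    """Build a query job configuration that writes results to a fixed table."""
    return {
        "destinationTable": {
            "projectId": project_id,
            "datasetId": dataset_id,
            "tableId": table_id,
        },
        # WRITE_TRUNCATE replaces existing rows; WRITE_APPEND adds to them.
        "writeDisposition": "WRITE_TRUNCATE" if overwrite else "WRITE_APPEND",
    }


# Placeholder identifiers, for illustration only.
bq_forecast_configuration = destination_config(
    "my_project", "my_dataset", "hourly_forecasts"
)
print(json.dumps(bq_forecast_configuration, indent=2))
```

Overwriting on each run suits an hourly retraining schedule where only the latest forecasts matter; appending would instead keep a history of every run.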

After you generate your forecasts, you can also explain them using the BigqueryExplainForecastModelJobOp, which extends the capabilities of the BigqueryForecastModelJobOp operator and lets you use the ML.EXPLAIN_FORECAST function, which provides extra model explainability such as trend, detected seasonality, and holiday effects.

```python
# Explain the hourly forecasts after the forecast step has completed.
bq_arima_explain_forecast_op = BigqueryExplainForecastModelJobOp(
    project=project,
    location=location,
    model=bq_arima_model_op.outputs['model'],
    horizon=1,
    confidence_level=0.9,
    job_configuration_query=bq_explain_forecast_configuration,
).set_display_name("explain hourly forecasts").after(bq_arima_forecast_op)
```

Finally, here is the visualization of the overall pipeline you defined, as shown in the Vertex AI Pipelines UI.

And if you want to analyze, debug, and audit ML pipeline artifacts and their lineage, you can access the following representation in Vertex ML Metadata by clicking one of the yellow artifact objects rendered by the Google Cloud console.

Conclusion

In this blog post, we described the new BigQuery and BigQuery ML (BQML) components now available for Vertex AI Pipelines, enabling data scientists and ML engineers to orchestrate and automate any BigQuery and BigQuery ML functions. We also showed an end-to-end example of using the components for demand forecasting involving BigQuery ML and Vertex AI Pipelines.

What’s next

Are you ready to run your BQML pipeline with Vertex AI Pipelines? Check out the following resources and give it a try:

- Documentation
  - BigQuery ML
  - Vertex AI Pipelines
  - BigQuery and BigQuery ML components for Vertex AI Pipelines
- Code Labs
  - Intro to Vertex Pipelines
  - Using Vertex ML Metadata with Pipelines
- Vertex AI Samples: GitHub repository
- Video Series: AI Simplified: Vertex AI
- Quick Lab: Build and Deploy Machine Learning Solutions on Vertex AI

References

- https://cloud.google.com/blog/products/data-analytics/solving-for-food-waste-with-data-analytics-in-google-cloud
- https://cloud.google.com/architecture/build-visualize-demand-forecast-prediction-datastream-dataflow-bigqueryml-looker
- https://cloud.google.com/blog/topics/developers-practitioners/announcing-bigquery-and-bigquery-ml-operators-vertex-ai-pipelines
