Today, we’re pleased to announce Amazon SageMaker Training Compiler, a new Amazon SageMaker capability that can accelerate the training of deep learning (DL) models by up to 50%.

As DL models grow in complexity, so too does the time it can take to optimize and train them. For example, it can take 25,000 GPU-hours to train the popular natural language processing (NLP) model “RoBERTa”. Although there are techniques and optimizations that customers can apply to reduce the time it takes to train a model, these also take time to implement and require a rare skill set. This can impede innovation and progress in the wider adoption of artificial intelligence (AI).

How has this been done to date?

Typically, there are three ways to speed up training:

- Using more powerful individual machines to process the calculations

- Distributing compute across a cluster of GPU instances to train the model in parallel

- Optimizing model code to run more efficiently on GPUs by using less memory and compute

In practice, optimizing machine learning (ML) code is difficult and time-consuming, and it requires a rare skill set. Data scientists typically write their training code in a Python-based ML framework, such as TensorFlow or PyTorch, relying on the framework to convert their Python code into mathematical functions that can run on GPUs, commonly known as kernels. However, this translation from the user’s Python code is often inefficient because ML frameworks use pre-built, generic GPU kernels instead of creating kernels specific to the user’s code and model.

It can take even the most skilled GPU programmers months to create custom kernels for each new model and optimize them. We built SageMaker Training Compiler to solve this problem.

Today’s launch lets SageMaker Training Compiler automatically compile your Python training code and generate GPU kernels specifically for your model. Consequently, the training code will use less memory and compute, and therefore train faster. For example, when fine-tuning Hugging Face’s GPT-2 model, SageMaker Training Compiler reduced training time from nearly three hours to 90 minutes.
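To relate the GPT-2 figures to the headline "up to 50%" number, here is a small arithmetic sketch. It assumes that "nearly three hours" can be rounded to 180 minutes and that the 50% figure refers to a reduction in training time. Both are readings of the text rather than stated facts, and the function names are purely illustrative.

```python
def time_reduction(baseline_minutes, compiled_minutes):
    """Fraction of training time saved relative to the baseline run."""
    return (baseline_minutes - compiled_minutes) / baseline_minutes


def speedup_factor(baseline_minutes, compiled_minutes):
    """How many times faster the compiled run finishes."""
    return baseline_minutes / compiled_minutes


baseline = 180.0  # assumption: "nearly three hours" rounded up to 180 minutes
compiled = 90.0   # 90 minutes, as quoted for the GPT-2 fine-tuning example

print(f"time saved: {time_reduction(baseline, compiled):.0%}")   # time saved: 50%
print(f"speedup: {speedup_factor(baseline, compiled):.1f}x")     # speedup: 2.0x
```

Under those assumptions, a 50% reduction in training time corresponds to roughly a 2x speedup.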

Automatically Optimizing Deep Learning Models

So, how have we achieved this acceleration? SageMaker Training Compiler accelerates training jobs by converting DL models from their high-level language representation to hardware-optimized instructions that train faster than jobs with off-the-shelf frameworks. Under the hood, SageMaker Training Compiler makes incremental optimizations beyond what the native PyTorch and TensorFlow frameworks offer to maximize compute and memory utilization on SageMaker GPU instances.

More specifically, SageMaker Training Compiler uses graph-level optimizations (operator fusion, memory planning, and algebraic simplification), data flow-level optimizations (layout transformation, common sub-expression elimination), and back-end optimizations (memory latency hiding, loop-oriented optimizations) to produce an optimized model that efficiently uses hardware resources. As a result, training is accelerated by up to 50%, and the returned model is the same as if SageMaker Training Compiler had not been used.
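As a toy illustration of one of the graph-level optimizations named above, operator fusion, the sketch below compares three separate element-wise passes with a single fused pass. It is plain Python rather than GPU code and does not show how SageMaker Training Compiler is implemented. The function names and values are hypothetical, chosen only to show that fusion removes intermediate buffers without changing the result.

```python
def unfused(xs, weight, bias):
    """Three separate passes, each materializing an intermediate list."""
    scaled = [x * weight for x in xs]         # pass 1: multiply
    shifted = [s + bias for s in scaled]      # pass 2: add
    return [max(0.0, s) for s in shifted]     # pass 3: ReLU


def fused(xs, weight, bias):
    """One pass that applies multiply, add, and ReLU per element."""
    return [max(0.0, x * weight + bias) for x in xs]


inputs = [-2.0, 0.5, 3.0]
print(unfused(inputs, 2.0, -1.0))  # [0.0, 0.0, 5.0]
print(fused(inputs, 2.0, -1.0))    # [0.0, 0.0, 5.0]
```

Both versions return the same values, but the fused version reads the input once and allocates no intermediate lists. On a GPU, the analogous change saves kernel launches and memory traffic.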

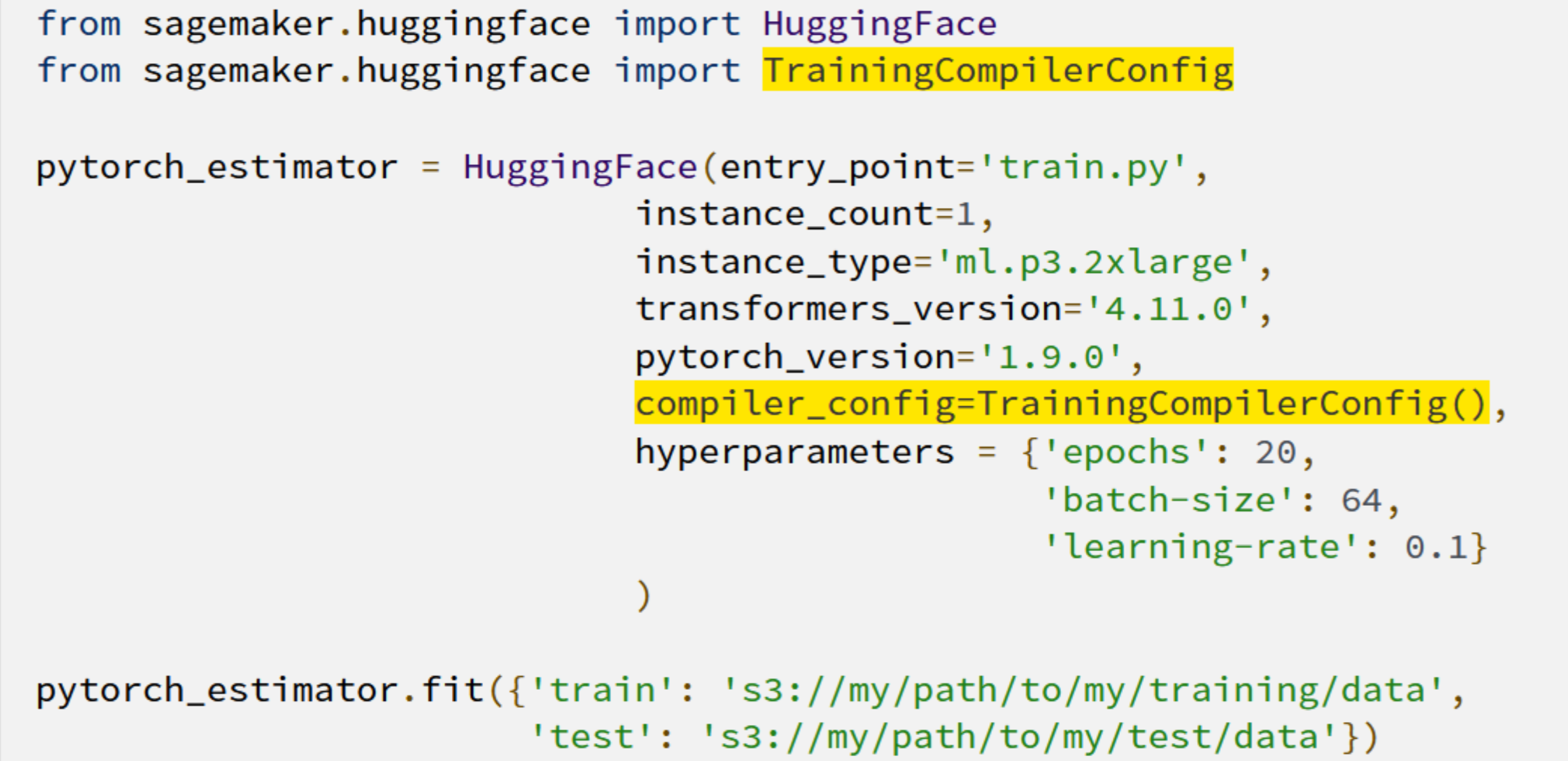

But how do you use SageMaker Training Compiler with your models? It can be as simple as adding two lines of code!
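A minimal sketch of what those two lines might look like with the Hugging Face estimator in the SageMaker Python SDK: import `TrainingCompilerConfig`, then pass an instance as `compiler_config`. The helper name, script name, instance type, framework versions, and hyperparameters below are placeholder assumptions, not values from this announcement. Check the technical documentation for the combinations that are actually supported.

```python
def build_compiled_estimator(role_arn, entry_point="train.py"):
    """Return a Hugging Face estimator with SageMaker Training Compiler enabled.

    This helper is hypothetical. The import is done lazily so the sketch
    can be loaded without the SageMaker SDK installed.
    """
    # Added line 1 of 2: import the compiler configuration class.
    from sagemaker.huggingface import HuggingFace, TrainingCompilerConfig

    return HuggingFace(
        entry_point=entry_point,          # assumed name of your training script
        role=role_arn,                    # IAM role ARN supplied by the caller
        instance_type="ml.p3.2xlarge",    # assumption: a GPU instance type
        instance_count=1,
        transformers_version="4.11.0",    # assumption: verify supported versions
        pytorch_version="1.9.0",          # assumption: verify supported versions
        py_version="py38",                # assumption: verify supported versions
        hyperparameters={"epochs": 3},    # placeholder hyperparameters
        # Added line 2 of 2: enable SageMaker Training Compiler.
        compiler_config=TrainingCompilerConfig(),
    )
```

Calling `build_compiled_estimator(...)` and then `.fit()` on the result would launch a training job, which requires AWS credentials and a valid IAM role. Nothing is created just by defining the function.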

Shorter training times give customers more time to innovate and deploy their newly trained models, lower costs, and a greater ability to experiment with larger models and more data.

Getting the most from SageMaker Training Compiler

Although many DL models can benefit from SageMaker Training Compiler, larger models with longer training times will realize the greatest time and cost savings. For example, training time and costs fell by 30% on a long-running RoBERTa-base fine-tuning exercise.

Jorge Lopez Grisman, a Senior Data Scientist at Quantum Health – an organization on a mission to “make healthcare navigation smarter, simpler, and more cost-effective for everybody” – said:

“Iterating with NLP models can be a challenge because of their size: long training times bog down workflows and high costs can discourage our team from trying larger models that might offer better performance. Amazon SageMaker Training Compiler is exciting because it has the potential to alleviate these frictions. Achieving a speedup with SageMaker Training Compiler is a real win for our team that will make us more agile and innovative moving forward.”

Further Resources

To learn more about how Amazon SageMaker Training Compiler can benefit you, you can visit our page here. And to get started, see our technical documentation here.
