
Google Cloud Billing allows Billing Account Administrators to configure the export of Google Cloud billing data to a BigQuery dataset for analysis and intercompany billback scenarios. Developers may choose to extract Google Cloud VMware Engine (GCVE) configuration and usage data, then apply internal cost and pricing data to create custom reports that support GCVE resource billback scenarios. Using VMware PowerCLI, a collection of modules for Windows PowerShell, the data is extracted and loaded into BigQuery. Once the data is in BigQuery, analysts may choose to create billing reports and dashboards using Looker or Google Sheets.

Exporting Google Cloud billing data into a BigQuery dataset is relatively straightforward. However, exporting data from GCVE into BigQuery requires PowerShell scripting. This blog details the steps to extract data from GCVE and load it into BigQuery for reporting and analysis.

Initial Setup: VMware PowerCLI Installation

Installing and configuring VMware PowerCLI is a relatively quick process. The primary dependency is network connectivity between the GCVE Private Cloud and the host used to develop and run the PowerShell script.


[Option A] Provision a Google Compute Engine instance (for example, Windows Server 2019) to use as a development server.

[Option B] Alternatively, use a Windows laptop with PowerShell 3.0 or higher installed.

Launch the PowerShell ISE as Administrator. Install and configure VMware PowerCLI and any required dependencies, then perform a connection test.

Reference: https://www.powershellgallery.com/packages/VMware.PowerCLI/12.3.0.17860403

VMware PowerCLI Development

Next, develop a script to extract data and load it into BigQuery. Note that the developer needs permissions to create a BigQuery dataset, create tables and insert data. An example process, including code samples, follows.

1. Import the PowerCLI module and connect to the GCVE cluster.

2. [Optional] For development purposes, a vCenter simulator Docker container such as nimmis/vcsim (a vCenter and ESXi API based simulator) may be useful. For information on setting up a vCenter simulator, see: https://www.altaro.com/vmware/powercli-scripting-vcsim/

3. Create a dataset in BigQuery to hold the data tables. A single dataset can hold multiple tables.

4. Create a list of the vCenters you want to collect data from.

5. Create a variable for the output file name.
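Steps 4 and 5 amount to a list of vCenter hosts and a predictable output file name for each run. The post implements these as PowerShell variables. The sketch below shows the same idea in Python. The host names and the `gcve_vm_inventory` prefix are hypothetical placeholders. The date-stamped naming pattern is one possible convention, chosen so that daily extracts do not overwrite each other.

```python
from datetime import date

# Hypothetical vCenter host names; replace with the vCenters in your private cloud(s).
VCENTERS = [
    "vcenter-01.example.internal",
    "vcenter-02.example.internal",
]


def export_file_name(vcenter: str, run_date: date, prefix: str = "gcve_vm_inventory") -> str:
    """Build a per-vCenter, per-day file name such as gcve_vm_inventory_vcenter-01_20210801.json."""
    # Keep only the short host name so the file name stays readable.
    short_host = vcenter.split(".")[0]
    return f"{prefix}_{short_host}_{run_date:%Y%m%d}.json"


if __name__ == "__main__":
    for vc in VCENTERS:
        print(export_file_name(vc, date.today()))
```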

6. For a simple VM inventory, extract data using the Get-VM cmdlet. You may also choose to extract data using other cmdlets, for example Get-VMHost and Get-Datastore. Review the vSphere developer documentation for more information on the available cmdlets, including specific examples.

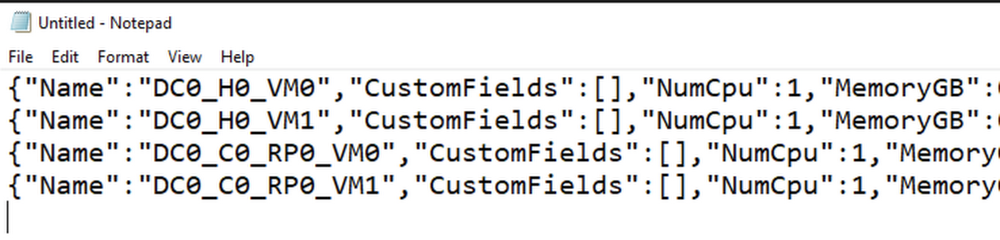

7. View and validate the JSON data as required.

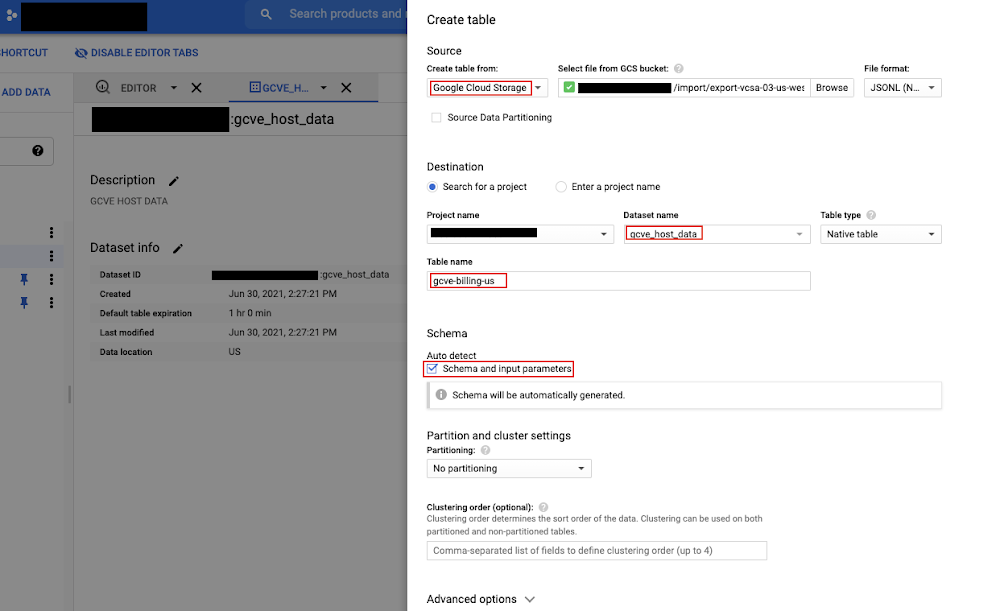
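It is worth checking one detail at this step. BigQuery loads JSON only in newline-delimited form, with one object per line. `ConvertTo-Json`, by contrast, writes a single JSON array, or a bare object when the pipeline holds just one VM. The Python sketch below converts either shape into newline-delimited JSON and checks each record for a required set of fields. It assumes the script flattened the `Get-VM` output with `Select-Object` before conversion. The field list is a hypothetical example to adapt.

```python
import json

# Hypothetical property list; match it to the Select-Object list in your PowerShell script.
REQUIRED_FIELDS = ("Name", "PowerState", "NumCpu", "MemoryGB")


def to_ndjson(powershell_json: str, required=REQUIRED_FIELDS) -> str:
    """Normalize ConvertTo-Json output (array or single object) to newline-delimited JSON."""
    # Drop a leading byte-order mark, which some PowerShell output encodings write.
    data = json.loads(powershell_json.lstrip("\ufeff"))
    # A pipeline holding one VM serializes as a bare object rather than an array.
    records = data if isinstance(data, list) else [data]
    lines = []
    for i, record in enumerate(records):
        if not isinstance(record, dict):
            raise ValueError(f"record {i} is not a JSON object")
        missing = [field for field in required if field not in record]
        if missing:
            raise ValueError(f"record {i} is missing fields: {missing}")
        # Compact separators keep each record on a single line.
        lines.append(json.dumps(record, separators=(",", ":")))
    return "".join(line + "\n" for line in lines)
```

Read the exported file with the encoding it was written in, pass the text to `to_ndjson`, and write the result back out as UTF-8 before uploading. Windows PowerShell's `Out-File` defaults to UTF-16 unless `-Encoding` is given.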

8. Create a table in BigQuery. Note that this only needs to be done once. In the example below, the .json file was first loaded into a Cloud Storage bucket, and the table was then created from the file in the bucket.

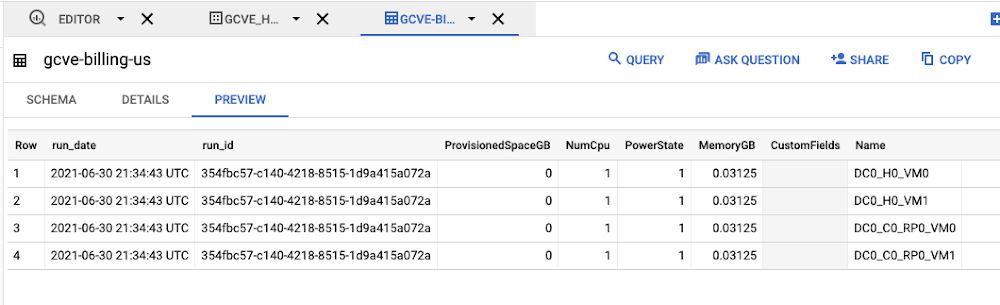
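You may prefer to define the table schema explicitly rather than derive it from the file. The `bq` command-line tool accepts a JSON schema file, which is a list of objects with `name`, `type` and `mode` keys. This hypothetical helper infers such a list from one sample record. It only handles flat records. Because it sees a single sample, review its output before creating the table. For example, a capacity value that happens to be a whole number is typed `INTEGER`.

```python
import json

# Order matters: bool is a subclass of int in Python, so it must be tested first.
_TYPE_MAP = ((bool, "BOOLEAN"), (int, "INTEGER"), (float, "FLOAT"), (str, "STRING"))


def infer_schema(record: dict) -> list:
    """Infer a BigQuery JSON schema (name/type/mode objects) from one flat sample record."""
    schema = []
    for name, value in record.items():
        bq_type = "STRING"  # fallback for None and any unmapped type
        for py_type, candidate in _TYPE_MAP:
            if isinstance(value, py_type):
                bq_type = candidate
                break
        schema.append({"name": name, "type": bq_type, "mode": "NULLABLE"})
    return schema


if __name__ == "__main__":
    sample = {"Name": "vm-01", "PowerState": "PoweredOn", "NumCpu": 2, "MemoryGB": 8.0}
    # Save this output as a schema file, e.g. vm_inventory_schema.json (hypothetical name).
    print(json.dumps(infer_schema(sample), indent=2))
```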

9. Load the file into BigQuery.
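The following is a minimal sketch of step 9 using the `bq` command-line tool, driven from Python so the containerized variant described later can reuse it. It assumes the table from step 8 already exists, so no schema argument is needed when appending. It also assumes the file is newline-delimited JSON. The `--replace` flag overwrites the table instead of appending, which suits full inventory snapshots.

```python
import subprocess
from typing import List, Optional


def bq_load_args(table: str, source: str, schema_file: Optional[str] = None,
                 replace: bool = False) -> List[str]:
    """Build a `bq load` command for a newline-delimited JSON file."""
    args = ["bq", "load", "--source_format=NEWLINE_DELIMITED_JSON"]
    if replace:
        # Overwrite existing rows instead of appending to the table.
        args.append("--replace")
    # Positional arguments: destination table, local file or gs:// URI, optional schema file.
    args += [table, source]
    if schema_file:
        args.append(schema_file)
    return args


def run_bq_load(args: List[str]) -> None:
    """Run the command; raises subprocess.CalledProcessError if bq exits non-zero."""
    subprocess.run(args, check=True)
```

For example, `run_bq_load(bq_load_args("gcve_billing.vm_inventory", "gs://example-bucket/gcve_vm_inventory.json"))` appends one extract. The dataset, table and bucket names there are hypothetical.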

10. Disconnect from the vCenter server.

11. Consider scheduling the script with Windows Task Scheduler, cron or another scheduling tool so that it runs on the required schedule.

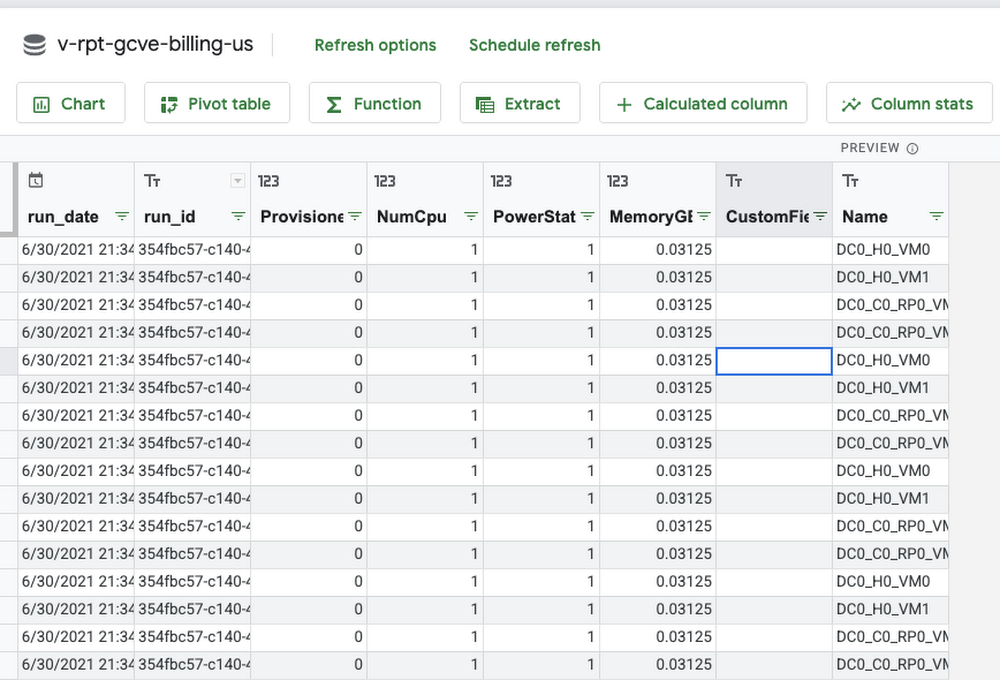

12. Using the BigQuery UI or Google Data Studio, create views and queries that reference the staging tables to extract and transform data for reporting and analysis. It is a good idea to create supporting tables in BigQuery for cost analysis. Examples include a date dimension table, a pricing schedule table and other lookup tables that support allocations and departmental bill back scenarios. Connect to BigQuery from Looker to create reports and dashboards.

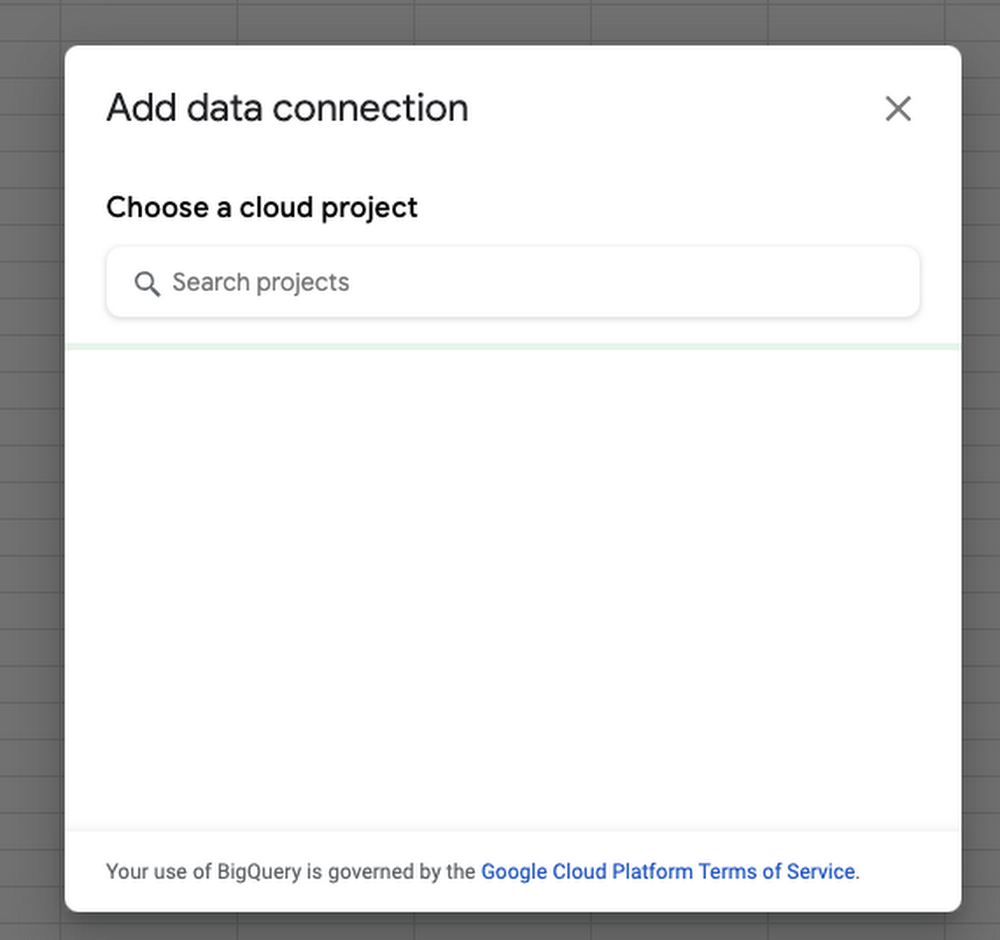

Example: Connect to BigQuery from Google Sheets and import data.
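The Python sketch below illustrates how a pricing schedule table turns inventory rows into billback amounts. It mirrors the arithmetic a BigQuery view might perform. The monthly per-vCPU, per-GB-memory and per-GB-storage rates are hypothetical, and so is the `Department` field. Real rates would come from your pricing schedule table, and department mappings from a lookup table or custom tag. The capacity fields use `Get-VM` property names.

```python
from collections import defaultdict

# Hypothetical monthly internal rates; in BigQuery these would sit in a pricing schedule table.
PRICING = {"vcpu": 20.0, "memory_gb": 5.0, "storage_gb": 0.10}


def vm_monthly_cost(vm: dict, pricing: dict = PRICING) -> float:
    """Price one VM from its Get-VM capacity fields."""
    cost = (vm["NumCpu"] * pricing["vcpu"]
            + vm["MemoryGB"] * pricing["memory_gb"]
            + vm["ProvisionedSpaceGB"] * pricing["storage_gb"])
    return round(cost, 2)


def billback_by_department(vms, pricing: dict = PRICING) -> dict:
    """Sum VM costs per department; VMs without a department go to 'unallocated'."""
    totals = defaultdict(float)
    for vm in vms:
        totals[vm.get("Department") or "unallocated"] += vm_monthly_cost(vm, pricing)
    return {dept: round(total, 2) for dept, total in totals.items()}
```

With these rates, a VM with 2 vCPUs, 8 GB of memory and 100 GB provisioned costs 2 × 20 + 8 × 5 + 100 × 0.10 = 90.00 per month.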

Using Custom Tags

Custom tags allow a GCVE administrator to associate a VM with a specific service or application, which makes them useful for bill back and cost allocation. For example, VMs whose custom tag holds a service name (e.g. x-callcenter) can be grouped together to calculate the direct costs of delivering that service. Jump boxes or other shared VMs can be tagged accordingly and grouped to support shared service and indirect cost allocations. Custom tags can be combined with key metrics such as provisioned, utilized and available capacity. Together they help GCVE administrators optimize infrastructure and support budgeting and accounting requirements.
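The following Python sketch shows one way the tag-based grouping could work. VMs tagged with a service name accumulate direct cost. VMs carrying a hypothetical `shared` tag form an indirect pool, which is spread across services in proportion to their direct cost. The proportional rule is an illustrative choice, not something the post prescribes. Other allocation drivers, such as provisioned capacity, would fit the same structure.

```python
from collections import defaultdict

SHARED_TAG = "shared"  # hypothetical tag value for jump boxes and other shared VMs


def allocate_costs(vm_costs):
    """vm_costs: iterable of (service_tag, monthly_cost) pairs.

    Returns {service: {"direct": ..., "shared": ..., "total": ...}}.
    """
    direct = defaultdict(float)
    shared_pool = 0.0
    for tag, cost in vm_costs:
        if tag == SHARED_TAG:
            shared_pool += cost
        else:
            # VMs with no tag are grouped so their cost stays visible.
            direct[tag or "untagged"] += cost
    total_direct = sum(direct.values())
    result = {}
    for service, cost in direct.items():
        # Each service absorbs shared cost in proportion to its share of direct cost.
        share = shared_pool * cost / total_direct if total_direct else 0.0
        result[service] = {
            "direct": round(cost, 2),
            "shared": round(share, 2),
            "total": round(cost + share, 2),
        }
    return result
```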

Serverless Billing Exports Scheduled with Cloud Scheduler

In addition to running the PowerShell code as a scheduled task, you may choose to host your script in a container and trigger it through a web service. One possible solution might look something like this:


1. Create a Dockerfile based on Ubuntu 18.04, and install Python 3, PowerShell 7 and VMware PowerCLI.


Example requirements.txt:

Example Dockerfile:

2. In your main.py script, use subprocess to run your PowerShell script. Push your container image to Container Registry, deploy the container, and schedule the ELT job using Cloud Scheduler.
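The following is a minimal `main.py` sketch for this pattern. It uses only the Python standard library rather than a web framework. It makes three assumptions: the container is deployed to a service such as Cloud Run, which supplies the listening port in the `PORT` environment variable; `pwsh` is on the image's PATH; and the export script lives at a hypothetical `/app/gcve_export.ps1`. Each POST, for example from a Cloud Scheduler HTTP job, runs the script once and returns 200 or 500.

```python
import os
import subprocess
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical location of the PowerShell export script inside the image.
SCRIPT_PATH = os.environ.get("EXPORT_SCRIPT", "/app/gcve_export.ps1")
DEFAULT_CMD = ["pwsh", "-NoProfile", "-NonInteractive", "-File", SCRIPT_PATH]


def run_export(cmd=None, timeout=3600):
    """Run the export command; return (succeeded, combined stdout/stderr)."""
    try:
        proc = subprocess.run(cmd or DEFAULT_CMD, capture_output=True, text=True, timeout=timeout)
    except (OSError, subprocess.TimeoutExpired) as exc:
        # OSError covers a missing pwsh binary; TimeoutExpired covers a hung script.
        return False, str(exc)
    return proc.returncode == 0, proc.stdout + proc.stderr


class ExportHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        ok, output = run_export()
        # Log the script output rather than returning it to the caller.
        print(output, flush=True)
        body = b"export succeeded\n" if ok else b"export failed\n"
        self.send_response(200 if ok else 500)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve():
    """Listen on $PORT (default 8080) and handle export requests until stopped."""
    port = int(os.environ.get("PORT", "8080"))
    HTTPServer(("", port), ExportHandler).serve_forever()
```

Call `serve()` from the container's entry point. The script output is printed to stdout so the platform's logging can pick it up, and it is kept out of the HTTP response.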
