
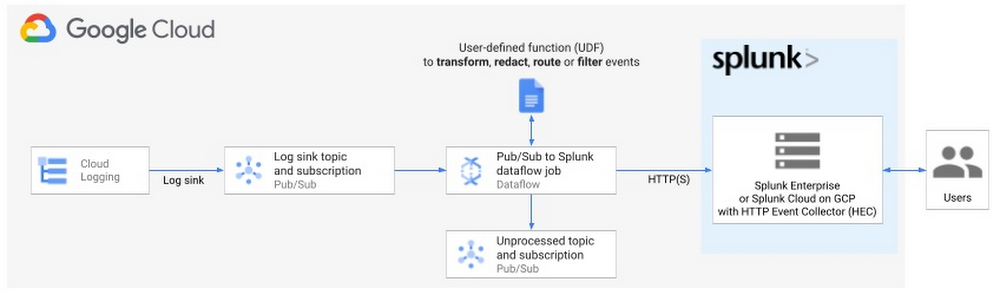

Last year, we released the Pub/Sub to Splunk Dataflow template to help customers easily and reliably export their high-volume Google Cloud logs and events into their Splunk Enterprise environment or their Splunk Cloud on Google Cloud (now in Google Cloud Marketplace). Since launch, we have seen great adoption across both enterprises and digital natives using the Pub/Sub to Splunk Dataflow template to get insights from their Google Cloud data.

Pub/Sub to Splunk Dataflow template used to export Google Cloud logs into Splunk HTTP Event Collector (HEC)

We’ve been working with many of our users to identify and add new capabilities that not only address some of their feedback but also reduce the effort to integrate and customize the Splunk Dataflow template.

Here’s the list of feature updates, which are covered in more detail below:

- Automatic log parsing with improved compatibility with Splunk Add-on for GCP

- More extensibility with user-defined functions (UDFs) for custom transforms

- Reliability and fault tolerance enhancements

The theme behind these updates is to accelerate time to value (TTV) for customers by reducing both operational complexity on the Dataflow side and data wrangling (aka knowledge management) on the Splunk side.


We want to help businesses spend less time managing infrastructure and integrations with third-party applications, and instead get value and time-sensitive insights from their Google Cloud data, be it for business analytics, IT, or security operations. “We have a reliable deployment and testing framework in place and confidence that it will scale to our demand. It’s probably the best kind of infrastructure, the one I don’t have to worry about,” says the lead Cloud Security Engineer of a major life sciences company that uses Splunk Dataflow pipelines to export multiple TBs of logs per day to power their critical security operations.

To take advantage of all these features, make sure to update to the latest Splunk Dataflow template (gs://dataflow-templates/latest/Cloud_PubSub_to_Splunk), or, at the time of this writing, version 2021-08-02-00_RC00 (gs://dataflow-templates/2021-08-02-00_RC00/Cloud_PubSub_to_Splunk) or newer.

More compatibility with Splunk

“All we have to decide is what to do with the time that is given us.”

— Gandalf

Splunk administrators and users now have more time to spend analyzing data, instead of parsing and extracting logs and fields.

Splunk Add-on for Google Cloud Platform

By default, the Pub/Sub to Splunk Dataflow template forwards only the Pub/Sub message body, as opposed to the entire Pub/Sub message and its attributes. You can change that behavior by setting the template parameter includePubsubMessage to true, which includes the entire Pub/Sub message as expected by the Splunk Add-on for GCP.

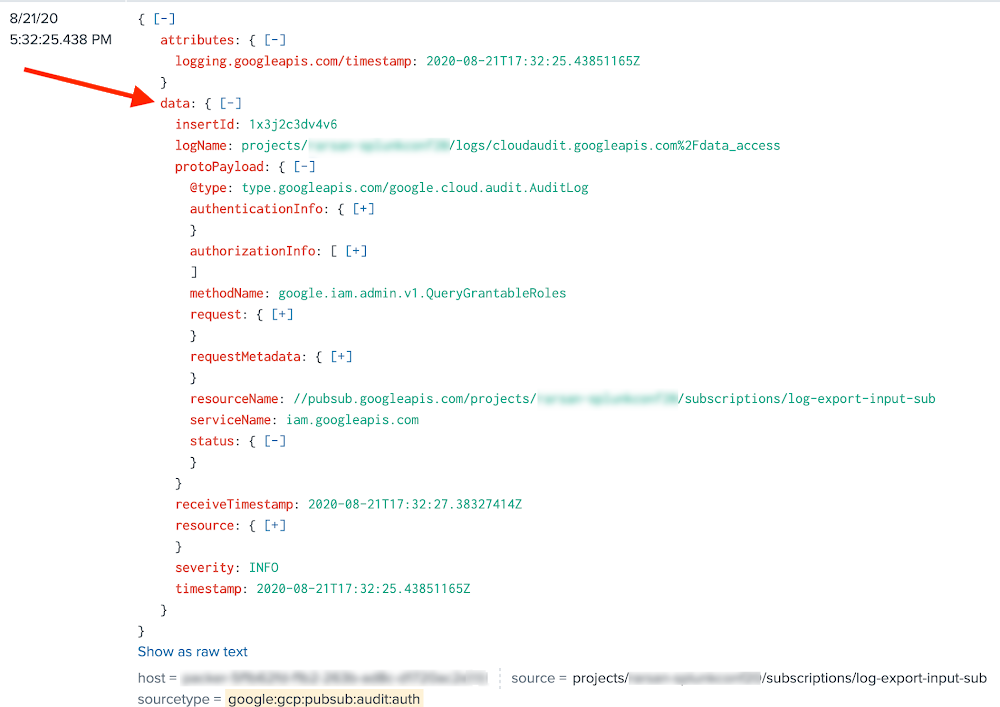
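The difference between the two settings can be sketched in TypeScript, which apart from its type annotations is the same language UDFs are written in. This is a simplified model under stated assumptions, not the template’s Java implementation: the PubsubMessage type and toSplunkEvent helper are hypothetical, and the message body is assumed to be JSON, such as a Cloud Logging entry.

```typescript
// Simplified model of an incoming Pub/Sub message (hypothetical type).
interface PubsubMessage {
  body: string;                          // message body, assumed to be JSON text
  attributes: { [key: string]: string }; // Pub/Sub message attributes
}

// Hypothetical helper: the event text forwarded to Splunk HEC for one message.
function toSplunkEvent(msg: PubsubMessage, includePubsubMessage: boolean): string {
  if (!includePubsubMessage) {
    // Default behavior: forward the body only; attributes are not included.
    return msg.body;
  }
  // includePubsubMessage=true: forward the whole message, with the JSON body
  // nested as an object under "data" alongside the attributes.
  return JSON.stringify({ data: JSON.parse(msg.body), attributes: msg.attributes });
}

const msg: PubsubMessage = { body: '{"severity":"ERROR"}', attributes: { source: "example" } };
console.log(toSplunkEvent(msg, false)); // {"severity":"ERROR"}
console.log(toSplunkEvent(msg, true));  // {"data":{"severity":"ERROR"},"attributes":{"source":"example"}}
```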

However, in prior versions of the Splunk Dataflow template, when includePubsubMessage=true, the Pub/Sub message body was stringified and nested under the field message, whereas the Splunk Add-on expected a JSON object nested under the data field.

Message body stringified prior to Splunk Dataflow version 2021-05-03-00_RC00

This led customers to either customize their Splunk Add-on configurations (via props and transforms) to parse the payload, or use spath to explicitly parse the JSON, and therefore maintain two flavors of Splunk searches (via macros) depending on whether data was pushed by Dataflow or pulled by the Add-on, which was far from an ideal experience. That is no longer necessary, because the Splunk Dataflow template now serializes messages in a manner compatible with the Splunk Add-on for GCP. In other words, the default JSON parsing, built-in Add-on field extractions, and data normalization work out of the box:

Message body as JSON payload as of Splunk Dataflow version 2021-05-03-00_RC00
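A minimal sketch of why the old shape needed extra parsing: with the body stringified under message, reaching a nested field takes a second JSON parse (the job spath or custom props and transforms did in Splunk), while the current shape exposes the field after a single parse. The sample entry, the attributes value, and both helper functions are illustrative assumptions, and both event shapes are simplified.

```typescript
// A sample Cloud Logging entry (illustrative values only).
const entry = { severity: "NOTICE", protoPayload: { methodName: "SetIamPolicy" } };
const attributes = { source: "example" };

// Legacy shape: body stringified under "message" (a JSON string inside JSON).
const legacyEvent = JSON.stringify({ message: JSON.stringify(entry), attributes });
// Current shape: body nested as a JSON object under "data".
const currentEvent = JSON.stringify({ data: entry, attributes });

// Reading a nested field from the legacy shape takes a second parse.
function methodNameLegacy(event: string): string {
  const outer = JSON.parse(event);
  return JSON.parse(outer.message).protoPayload.methodName;
}

// The current shape needs only the single, default JSON parse.
function methodNameCurrent(event: string): string {
  return JSON.parse(event).data.protoPayload.methodName;
}

console.log(methodNameLegacy(legacyEvent));   // SetIamPolicy
console.log(methodNameCurrent(currentEvent)); // SetIamPolicy
```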

Customers can readily take advantage of all the sourcetypes in the Splunk Add-on, including Common Information Model (CIM) compliance. That also ensures full compatibility with premium applications like Splunk Enterprise Security (ES) and IT Service Intelligence (ITSI) without any extra effort on the customer’s part, as long as they have set includePubsubMessage=true in their Splunk Dataflow pipelines.

Note on updating existing pipelines with includePubsubMessage:

If you’re updating your pipelines from includePubsubMessage=false to includePubsubMessage=true and you’re using a UDF, make sure to update your UDF implementation, since the function’s input argument is now the Pub/Sub message wrapper rather than the underlying message body, which is now the nested data field. In your function, assuming you save the JSON-parsed version of the input argument in an obj variable, the body payload is now nested in obj.data. For example, if your UDF processes a log entry from Cloud Logging, a reference to obj.protoPayload must be updated to obj.data.protoPayload.
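One way to make a UDF survive the switch is to locate the log entry first and then work on it, as in the hypothetical tagAuditLogs sketch below. It assumes a wrapped message can be recognized by its top-level data key (a raw Cloud Logging entry has no such field), and isAuditLog is an invented enrichment field; adapt both to your own data.

```typescript
// Hypothetical UDF that works both before and after switching a pipeline
// to includePubsubMessage=true.
function tagAuditLogs(inJson: string): string {
  const obj = JSON.parse(inJson);
  // Assumption: a top-level "data" key means the input is the Pub/Sub wrapper.
  const entry = obj.data !== undefined ? obj.data : obj; // obj.data.protoPayload vs obj.protoPayload
  if (entry.protoPayload !== undefined) {
    entry.isAuditLog = true; // hypothetical enrichment field
  }
  return JSON.stringify(obj);
}

console.log(tagAuditLogs('{"protoPayload":{"methodName":"SetIamPolicy"}}'));
// {"protoPayload":{"methodName":"SetIamPolicy"},"isAuditLog":true}
console.log(tagAuditLogs('{"data":{"protoPayload":{}},"attributes":{}}'));
// {"data":{"protoPayload":{},"isAuditLog":true},"attributes":{}}
```

Resolving the entry once at the top keeps the rest of the function identical for both pipeline settings, so a later change to includePubsubMessage does not require touching every field reference.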

Splunk HTTP Event Collector

We also heard from customers who wanted to use Splunk HEC ‘fields’ metadata to set custom index-time field extractions in Splunk. We’ve therefore added support for that last remaining Splunk HEC metadata field. Customers can now easily set these index-time field extractions on the sender side (Dataflow pipeline) rather than configuring non-trivial props and transforms on the receiver (Splunk indexer or heavy forwarder). A common use case is to index metadata fields from Cloud Logging, namely resource labels such as project_id and instance_id, to accelerate Splunk searches and correlations based on unique project IDs and instance IDs. See example 2.2 under ‘Pattern 2: Transform events’ in our blog about getting started with Dataflow UDFs for a sample UDF showing how to set HEC fields metadata using the resource.labels object.
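The referenced sample UDF is the authoritative example; the following is only a sketch of the idea. The hypothetical addHecFields function copies project_id and instance_id from resource.labels into HEC fields metadata. It assumes the template picks up Splunk metadata from a top-level _metadata object in the UDF output, the convention used in the UDF patterns article; check that article for the exact format your template version expects.

```typescript
// Hypothetical UDF: copy selected Cloud Logging resource labels into Splunk
// HEC "fields" metadata for index-time extraction.
// Assumption: the template reads Splunk metadata from a top-level "_metadata" object.
function addHecFields(inJson: string): string {
  const obj = JSON.parse(inJson);
  const entry = obj.data !== undefined ? obj.data : obj; // handle both message shapes
  const labels = (entry.resource && entry.resource.labels) || {};

  const fields: { [key: string]: string } = {};
  for (const key of ["project_id", "instance_id"]) {
    if (typeof labels[key] === "string") {
      fields[key] = labels[key]; // only string-valued labels are copied
    }
  }
  if (Object.keys(fields).length > 0) {
    obj._metadata = { ...obj._metadata, fields }; // keep any existing metadata keys
  }
  return JSON.stringify(obj);
}

const sample =
  '{"data":{"resource":{"type":"gce_instance","labels":{"project_id":"my-project","instance_id":"123"}}},"attributes":{}}';
console.log(JSON.stringify(JSON.parse(addHecFields(sample))._metadata));
// {"fields":{"project_id":"my-project","instance_id":"123"}}
```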

More extensibility with utility UDFs

“I don’t know, and I would rather not guess.”

— Frodo

If you want to tweak the Splunk Dataflow pipeline’s output format, you can do so without knowing Dataflow or Apache Beam programming, or even having a developer environment set up. You might want to enrich events with additional metadata fields, redact some sensitive fields, filter out undesired events, or set Splunk metadata such as the destination index to route events to.

When deploying the pipeline, you can reference a user-defined function (UDF), that is, a small snippet of JavaScript code, to transform the events in flight. The advantage is that you configure such a UDF as a template parameter, without changing, recompiling, or maintaining the Dataflow template code itself. In other words, UDFs offer a simple hook to customize the data format while abstracting away low-level template details.
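As a rough illustration of that hook: as we understand it, a UDF receives each event as a JSON string and returns the transformed event as a JSON string. The hypothetical filterRedactRoute sketch below filters, redacts, and routes in one pass. Assumptions: returning null drops the event, Splunk metadata such as index is read from a top-level _metadata object, and the gcp_audit index name is invented. Verify these conventions against the UDF patterns article for your template version.

```typescript
// Hypothetical UDF: filter, redact, and route Cloud Logging events in one pass.
function filterRedactRoute(inJson: string): string | null {
  const obj = JSON.parse(inJson);
  // Work on the log entry whether or not includePubsubMessage=true.
  const entry = obj.data !== undefined ? obj.data : obj;

  // Filter: drop DEBUG entries (assumption: a null return drops the event).
  if (entry.severity === "DEBUG") {
    return null;
  }

  // Redact: mask the caller identity in audit log entries, when present.
  const auth = entry.protoPayload && entry.protoPayload.authenticationInfo;
  if (auth && auth.principalEmail !== undefined) {
    auth.principalEmail = "[REDACTED]";
  }

  // Route: send audit logs to a dedicated index (hypothetical name), assuming
  // Splunk metadata is read from a top-level "_metadata" object.
  if (entry.protoPayload !== undefined) {
    obj._metadata = { ...obj._metadata, index: "gcp_audit" };
  }
  return JSON.stringify(obj);
}

const audit = '{"protoPayload":{"authenticationInfo":{"principalEmail":"alice@example.com"}}}';
console.log(filterRedactRoute(audit));
// {"protoPayload":{"authenticationInfo":{"principalEmail":"[REDACTED]"}},"_metadata":{"index":"gcp_audit"}}
console.log(filterRedactRoute('{"severity":"DEBUG","textPayload":"noise"}')); // null
```

To deploy something like this, compile it to plain JavaScript (for example with tsc --target ES5), upload the .js file to Cloud Storage, and point the template’s UDF parameters (javascriptTextTransformGcsPath and javascriptTextTransformFunctionName) at the file and the function name.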

When it comes to writing a UDF, you can eliminate guesswork by starting with one of the utility UDFs listed in Extend your Dataflow template with UDFs. That article also includes a practical guide on testing and deploying UDFs.

More reliability and error handling

“The board is set, the pieces are moving. We come to it at last, the great battle of our time.”

— Gandalf

Last but not least, the latest Dataflow template improves pipeline fault tolerance and provides a simpler troubleshooting experience for Dataflow operators.

In particular, the Splunk Dataflow template’s retry capability (with exponential backoff) has been extended to cover transient network failures (e.g., Connection timeout), in addition to transient Splunk server errors (e.g., Server is busy). Previously, the pipeline would immediately drop these events into the dead-letter topic. While this avoids data loss, it also adds an unnecessary burden on operators, who are responsible for replaying these undelivered messages. The new Splunk Dataflow template minimizes this overhead by retrying whenever possible, and only dropping messages into the dead-letter topic when there is a persistent issue like those listed in Delivery error types, or when the maximum retry elapsed time (15 minutes) has expired.
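The retry policy described above can be approximated with the following sketch. The 15-minute ceiling comes from this post; the error model, the set of HTTP statuses treated as transient, and the 1-second starting delay with a doubling multiplier are assumptions for illustration, not the template’s actual configuration.

```typescript
// Simplified delivery error model (hypothetical).
type DeliveryError =
  | { kind: "network"; detail: string } // e.g. connection timeout
  | { kind: "http"; status: number };   // HTTP status returned by Splunk HEC

// Maximum total time spent retrying, per this post: 15 minutes.
const MAX_ELAPSED_MS = 15 * 60 * 1000;

// Assumption: this set of transient statuses is illustrative only;
// see "Delivery error types" for the template's actual behavior.
const TRANSIENT_STATUSES = [429, 500, 502, 503, 504];

function isTransient(err: DeliveryError): boolean {
  if (err.kind === "network") {
    return true; // transient network failure: worth retrying
  }
  return TRANSIENT_STATUSES.indexOf(err.status) !== -1; // e.g. 503 "Server is busy"
}

// Decide what to do after a failed attempt, given the time already spent retrying.
function nextAction(err: DeliveryError, elapsedMs: number): "retry" | "deadLetter" {
  if (!isTransient(err)) {
    return "deadLetter"; // persistent error: retrying will not help
  }
  return elapsedMs < MAX_ELAPSED_MS ? "retry" : "deadLetter";
}

// Exponential backoff: delays grow by `multiplier` until the total wait
// would exceed maxElapsedMs.
function backoffSchedule(initialMs: number, multiplier: number, maxElapsedMs: number): number[] {
  const delays: number[] = [];
  let delay = initialMs;
  let elapsed = 0;
  while (elapsed + delay <= maxElapsedMs) {
    delays.push(delay);
    elapsed += delay;
    delay *= multiplier;
  }
  return delays;
}

// Hypothetical parameters: 1 s initial delay, doubling, 15 s budget for a short demo.
console.log(JSON.stringify(backoffSchedule(1000, 2, 15000))); // [1000,2000,4000,8000]
console.log(nextAction({ kind: "http", status: 503 }, 60000)); // retry
```

Separating classification (isTransient) from timing (nextAction, backoffSchedule) mirrors the two conditions in the text: persistent errors go to the dead-letter topic right away, and transient ones do so only after the retry budget runs out.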

Finally, as more customers adopt UDFs to customize the behavior of their pipelines as described in the previous section, we’ve invested in better logging for UDF-based errors such as JavaScript syntax errors. Previously, you could only troubleshoot these errors by inspecting the undelivered messages in the dead-letter topic. Now you can view these errors in the worker logs directly from the Dataflow job page in Cloud Console. Here’s an example query you can use in Logs Explorer:

You can also set up an alert policy in Cloud Monitoring to notify you whenever such a UDF error happens, so you can review the message payload in the dead-letter topic for further inspection. You can then either tweak the source log sink (if applicable) to filter out these unexpected logs, or revise your UDF logic to properly handle them.

What’s next?

“Home is behind, the world ahead, and there are many paths to tread through shadows to the edge of night, until the stars are all alight.”

— J. R. R. Tolkien

We hope this gives you a good overview of recent Splunk Dataflow enhancements. Our goal is to minimize your operational overhead for log aggregation and export, so you can focus on getting real-time insights from your valuable logs.

To get started, check out our detailed reference guide on deploying production-ready log exports to Splunk using Dataflow. Take advantage of the associated Splunk Dataflow Terraform module to automate deployment of your log export, as well as these sample UDFs to customize log transformation in flight before delivery to Splunk, as needed.

Be sure to keep an eye out for more Splunk Dataflow enhancements in our GitHub repo for Dataflow Templates. Every feature covered above is customer-driven, so please continue to submit any feature requests as GitHub repo issues, or directly from your Cloud Console as support cases.

Acknowledgements

Special thanks to Matt Hite from Splunk, both for his close collaboration in product co-development and for his partnership in serving joint customers.
