
Good artists copy, great artists steal, and smart software developers use other people’s machine learning models.

If you’ve trained ML models before, you know that one of the most time-consuming and cumbersome parts of the process is collecting and curating data to train those models. But for lots of problems, you can skip that step by instead using somebody else’s model that’s already been trained to do what you need, like detecting spam, converting speech to text, or labeling objects in images. All the better if that model is built and maintained by folks with access to big datasets, powerful training rigs, and machine learning expertise.

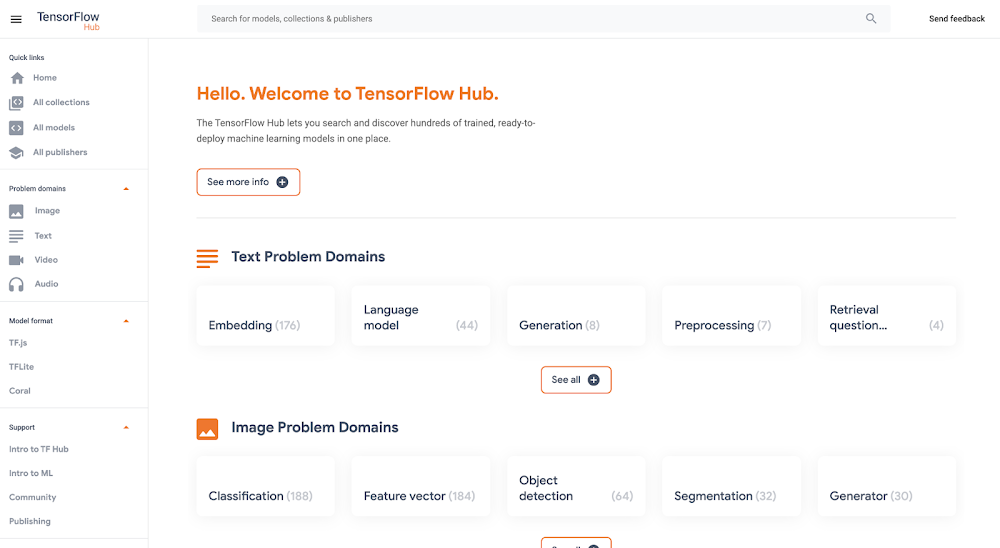

One great place to find these types of “pre-trained” models is TensorFlow Hub, which hosts tons of state-of-the-art models built by Google Research that you can download and use for free. Here you’ll find models for tasks like image segmentation, super resolution, question answering, text embedding, and a whole lot more. You don’t need a training dataset to use these models, which is good news, since some of them are huge and trained on massive datasets. But if you want to use one of these big models in your app, the challenge then becomes where to host it (in the cloud, most likely) so that it’s fast, reliable, and scalable.

For this, Google’s new Vertex AI platform is just the ticket. In this post, we’ll download a model from TensorFlow Hub and upload it to Vertex’s prediction service, which will host our model in the cloud and let us make predictions with it through a REST endpoint. It’s a serverless way to serve machine learning models. Not only does this make app development easier, but it also lets us take advantage of hardware like GPUs and the model monitoring features built into Vertex. Let’s get to it.

Prefer doing everything in code from a Jupyter notebook? Check out this Colab.

Download a model from TensorFlow Hub

On https://tfhub.dev/, you’ll find lots of free models that process audio, text, video, and images. In this post, we’ll grab one of the most popular Hub models, the Universal Sentence Encoder. This model takes as input a sentence or paragraph and returns a vector or “embedding” that maps the text to a point in space. These embeddings can then be used for everything from sentence similarity to smart search to building chatbots (read more about them here).
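To make the sentence-similarity use case concrete, here is a minimal sketch of comparing two embeddings with cosine similarity. The three-dimensional vectors are made-up stand-ins, not real Universal Sentence Encoder output (real embeddings are much longer), and `cosine_similarity` is a hypothetical helper name.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

# Toy vectors standing in for sentence embeddings (illustrative values only).
cat_sentence = [0.9, 0.1, 0.0]
kitten_sentence = [0.8, 0.2, 0.1]
tax_sentence = [0.0, 0.1, 0.9]

print(cosine_similarity(cat_sentence, kitten_sentence))  # close to 1: similar
print(cosine_similarity(cat_sentence, tax_sentence))     # close to 0: unrelated
```

Sentences with similar meanings should land near each other in embedding space, so their score approaches 1, while unrelated sentences score near 0.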

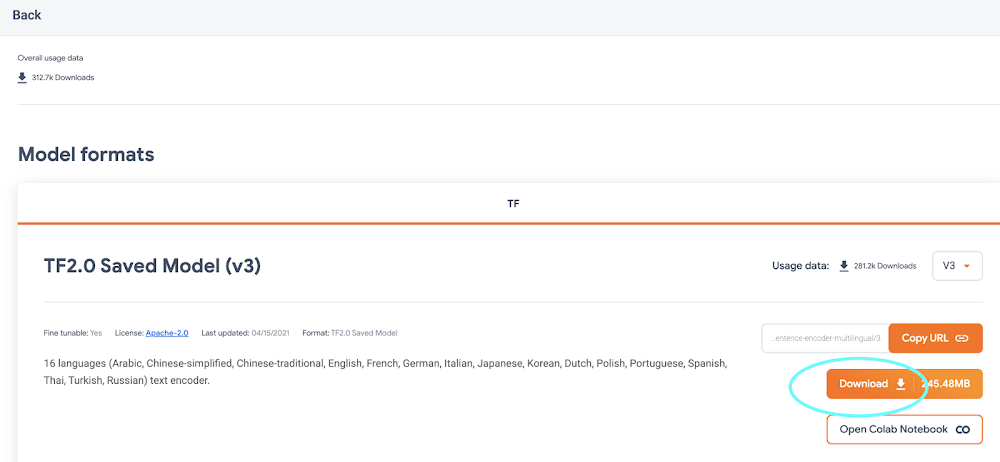

On the Universal Sentence Encoder page, click “Download” to grab the model in TensorFlow’s SavedModel format. You’ll download a zipped file that contains a directory formatted like so:

- universal-sentence-encoder_4
  - assets
  - saved_model.pb
  - variables
    - variables.data-00000-of-00001
    - variables.index

Here, the saved_model.pb file describes the structure of the saved neural network, and the files in the variables folder contain the network’s learned weights.
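Before uploading anything, it can be handy to confirm the unzipped folder really has that layout. The following is a small sketch using only the standard library; `missing_saved_model_parts` is a hypothetical helper, and it checks just the two files named above rather than validating the model itself.

```python
from pathlib import Path

def missing_saved_model_parts(model_dir):
    """Return the expected SavedModel entries that are absent from model_dir."""
    root = Path(model_dir)
    expected = ["saved_model.pb", "variables/variables.index"]
    return [rel for rel in expected if not (root / rel).is_file()]

# An empty list means both files were found in the downloaded folder.
print(missing_saved_model_parts("universal-sentence-encoder_4"))
```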

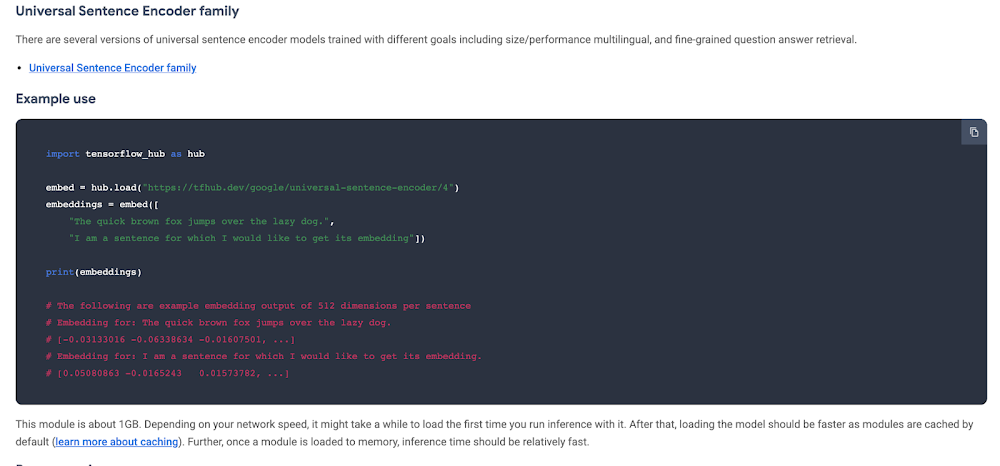

On the model’s Hub page, you can see its example usage:

You feed the model an array of sentences and it spits out an array of vectors.

Without this example, we can still learn what input and output the model supports by using TensorFlow’s SavedModel CLI. If you’ve got TensorFlow installed on your computer, in the directory of the Hub model you downloaded, run:

For this model, that command outputs:

From this, we know that our model expects as input a one-dimensional array of strings. We’ll use this in a moment.
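One way to run that inspection, sketched under the assumption that TensorFlow’s `saved_model_cli` is on your PATH: its `show` subcommand with `--dir` and `--all` prints every signature in a SavedModel. The wrapper below only assembles and optionally runs that command; `show_command` and `run_show` are hypothetical helper names.

```python
import shlex
import shutil
import subprocess

def show_command(model_dir="."):
    """Build the argv for `saved_model_cli show`, listing every signature."""
    return ["saved_model_cli", "show", "--dir", model_dir, "--all"]

def run_show(model_dir="."):
    """Run the command and return its text output, or None if the CLI is absent."""
    if shutil.which("saved_model_cli") is None:
        return None
    result = subprocess.run(
        show_command(model_dir), capture_output=True, text=True, check=True
    )
    return result.stdout

# Print the equivalent shell command line.
print(shlex.join(show_command()))
```

In the output, the inputs listed under the `serving_default` signature are the part that tells you what shape and type of data to send.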

Getting started with Vertex AI

Vertex AI is a new platform for training, deploying, and monitoring machine learning models, launched this year at Google I/O.

For this project, we’ll just use the prediction service, which will wrap our model in a convenient REST endpoint.

To get started, you’ll need a Google Cloud account with a GCP project set up. Next, you’ll need to create a Cloud Storage bucket, which is where you’ll upload the TensorFlow Hub model. You can do this from the command line using gsutil:

If the model is big, this could take a while!
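The bucket creation and upload described above might look like the following sketch. `gsutil mb` makes a bucket and `gsutil cp -r` copies a directory recursively; the bucket name `your_bucket_name`, the region `us-central1`, and the helper name `upload_commands` are placeholders, not values from this post.

```python
import shlex

def upload_commands(bucket, model_dir, region="us-central1"):
    """Return two gsutil argv lists: make the bucket, then copy the model into it."""
    bucket_url = f"gs://{bucket}"
    return [
        ["gsutil", "mb", "-l", region, bucket_url],
        ["gsutil", "cp", "-r", model_dir, bucket_url],
    ]

# Print the shell commands; pass each argv to subprocess.run to execute them.
for argv in upload_commands("your_bucket_name", "universal-sentence-encoder_4"):
    print(shlex.join(argv))
```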

In the Cloud Console side menu, enable the Vertex AI API.

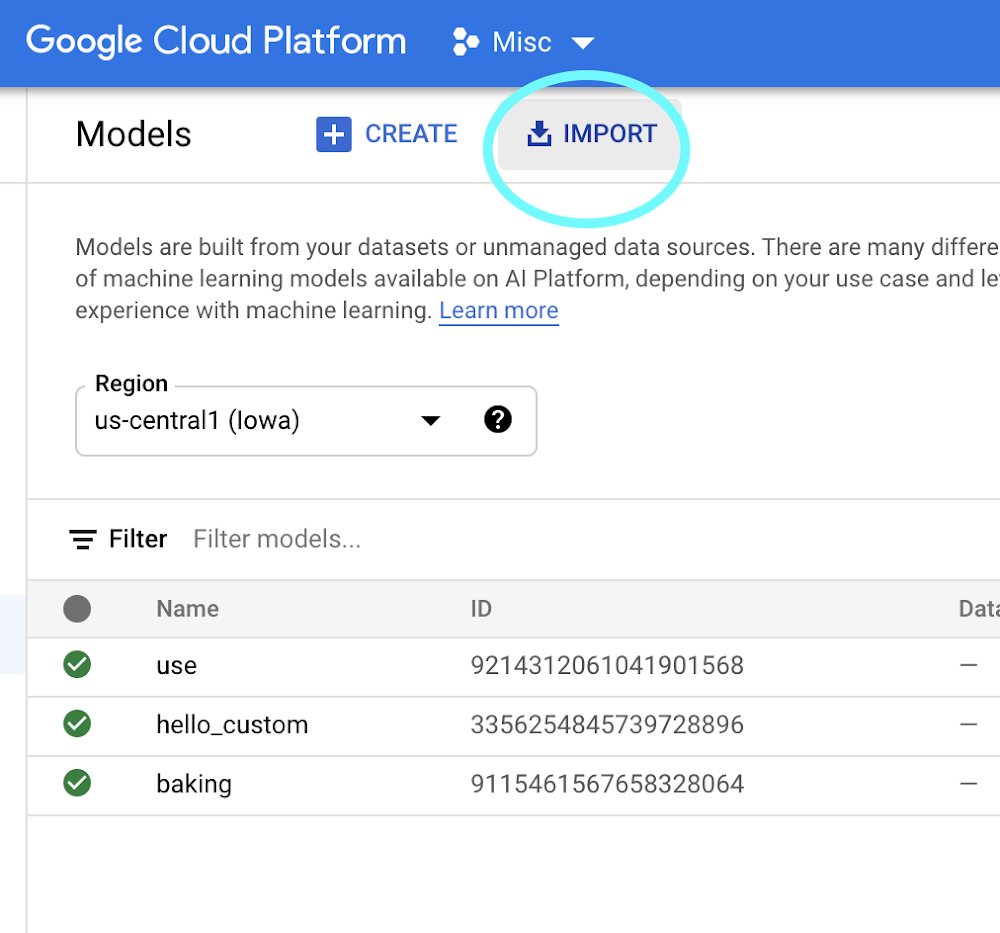

Once your Hub model is uploaded to Cloud Storage, it’s straightforward to import it into Vertex AI by following the docs or this quick summary:

1. On the Vertex AI “Models” tab, click “Import”:

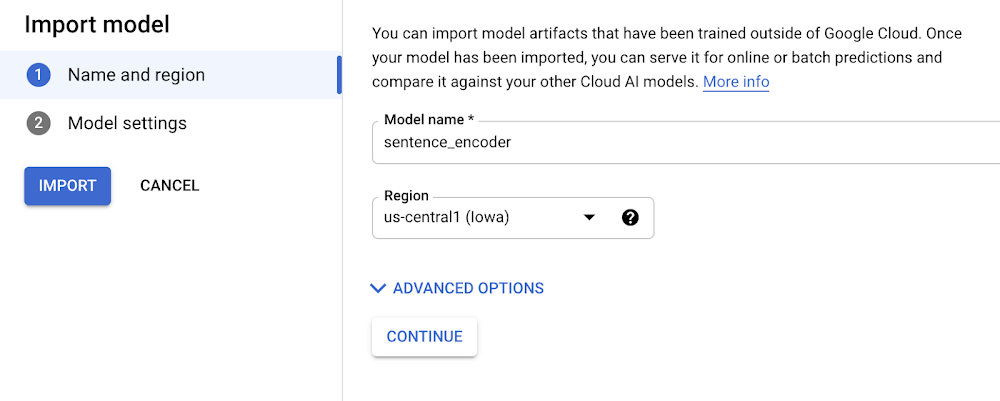

2. Choose any name for your model:

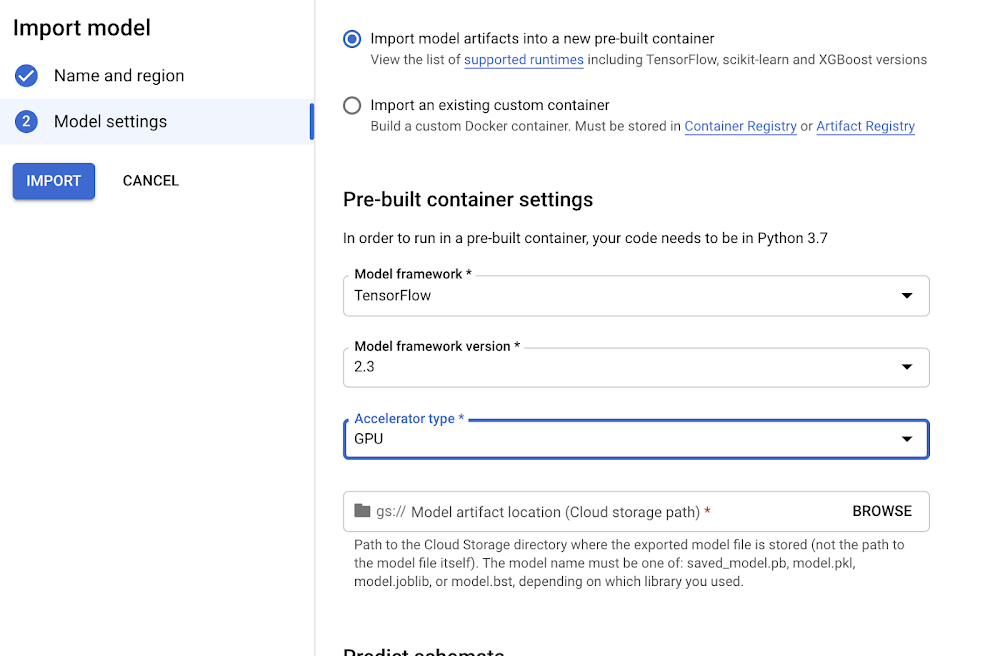

3. Choose a compatible version of TensorFlow to use with your model (for newer models, >= 2.0 should work). Select “GPU” if you want to pay for GPUs to speed up prediction time:

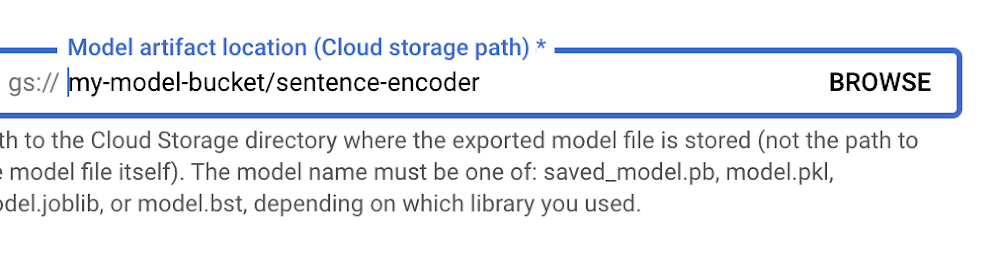

4. Point “Model artifact location” to the model folder you uploaded to Cloud Storage:

5. Click “Import.”

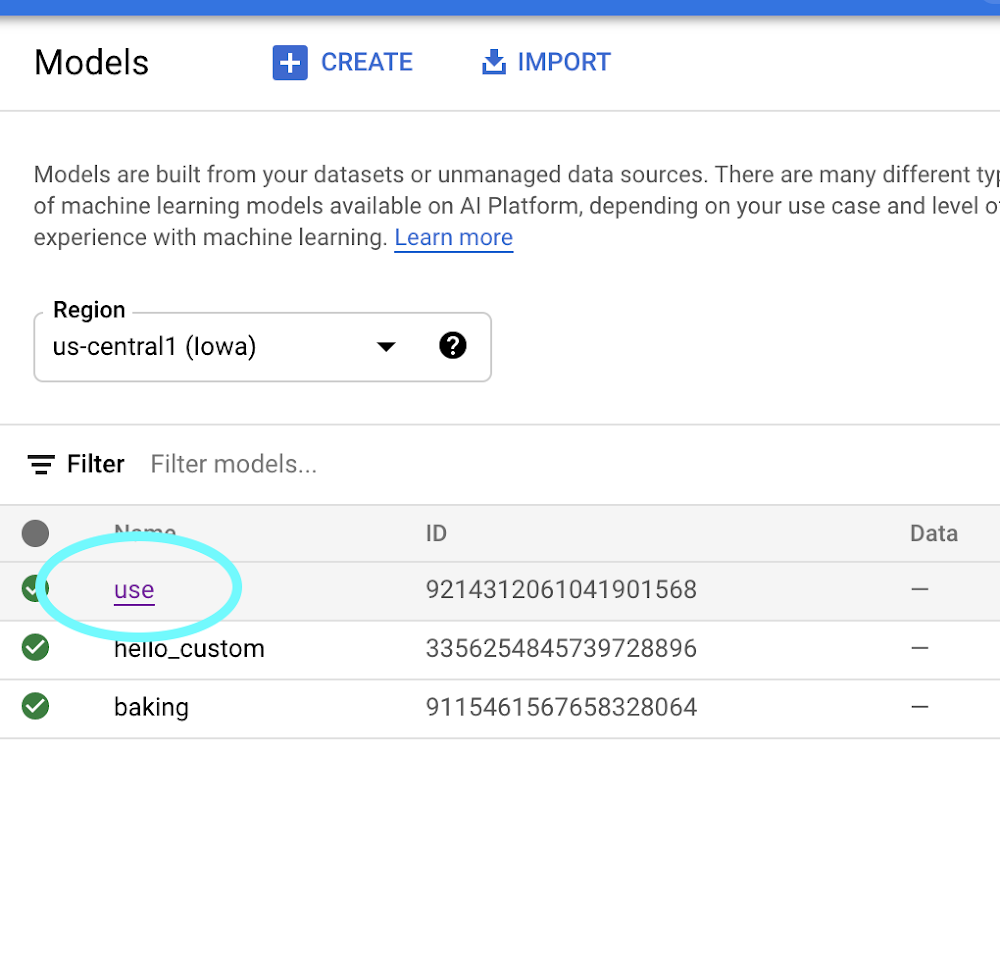

6. Once your model is imported, you’ll be able to try it out straight from the Models tab. Click on the name of your model:

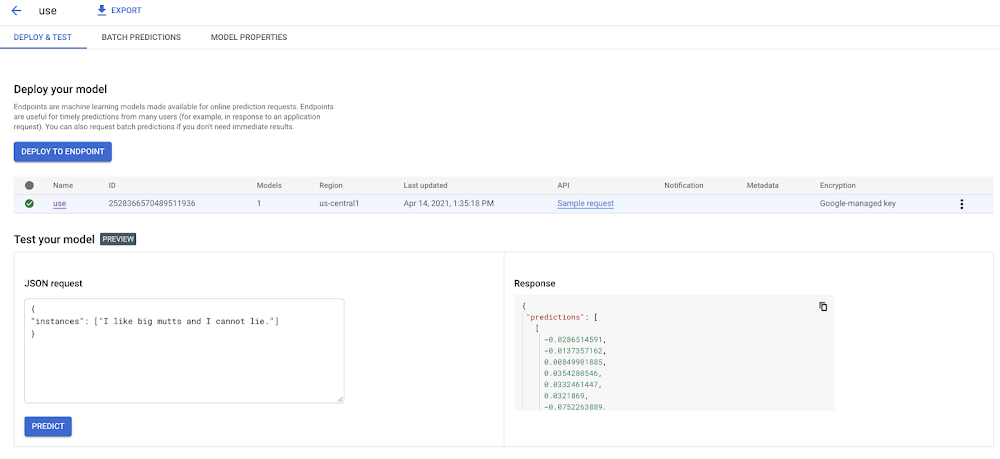

7. Here on the model page, you can test your model right from the UI. Remember how we inspected our model with the saved_model_cli earlier and learned it accepts an array of strings as input? Here’s how we can call the model with that input:

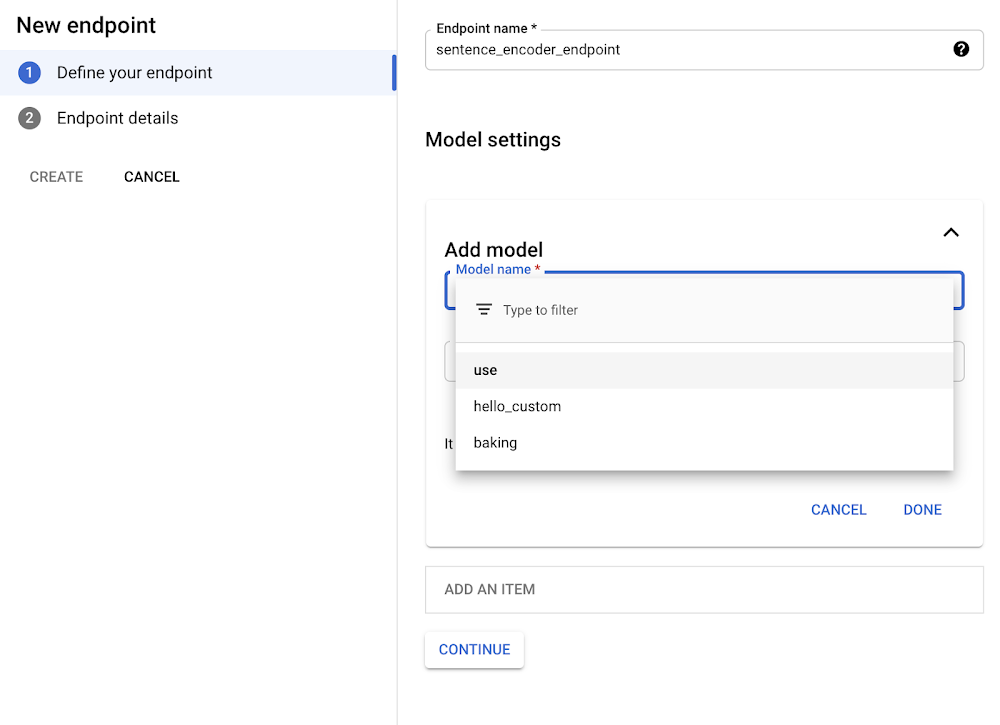

8. Once you’ve verified your model works in the UI, you’ll want to deploy it to an endpoint so you can call it from your app. In the “Endpoint” tab, click “Create Endpoint” and select the model you just imported:

9. Voila! Your TensorFlow Hub model is deployed and ready to be used. You can call it via a POST request from any web client or using the Python client library:
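As one hedged illustration of the POST route, the sketch below builds (but does not send) a request to a Vertex AI endpoint’s `:predict` method using only the standard library. The project ID, region, endpoint ID, and access token are all placeholders, and `build_predict_request` is a hypothetical helper name. Because the model takes a one-dimensional array of strings, each entry in `instances` is simply one sentence.

```python
import json
import urllib.request

def build_predict_request(project, region, endpoint_id, sentences, access_token):
    """Build (without sending) a POST request for a Vertex AI endpoint's :predict method."""
    url = (
        f"https://{region}-aiplatform.googleapis.com/v1/"
        f"projects/{project}/locations/{region}/endpoints/{endpoint_id}:predict"
    )
    # One instance per sentence, matching the model's array-of-strings input.
    body = json.dumps({"instances": list(sentences)}).encode("utf-8")
    headers = {
        "Authorization": f"Bearer {access_token}",
        "Content-Type": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")

request = build_predict_request(
    project="your-project-id",         # placeholder
    region="us-central1",              # placeholder
    endpoint_id="1234567890",          # placeholder
    sentences=["I am a sentence", "So am I"],
    access_token="YOUR_ACCESS_TOKEN",  # e.g. from `gcloud auth print-access-token`
)
print(request.full_url)
# To actually send it: urllib.request.urlopen(request).read()
```

If the call succeeds, the JSON response should carry one embedding per input sentence under a `predictions` key.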

Now that we’ve set up our TensorFlow Hub model on Vertex, we can use it in our app without having to think about (most of) the performance and ops challenges of using big machine learning models in production. It’s a nice serverless way to get building with AI fast. Happy hacking!
